Chapter 7 Working on remote servers

7.1 Accessing remote computers

The primary protocol for accessing remote computers in this day and age

is ssh which stands for “Secure Shell.” In the protocol, your computer

and the remote computer talk to one another and then choose to have a “shared

secret” which they can use as a key to encrypt data traffic from one to the other.

The amazing thing is that the two computers can actually tell each other what

that shared secret is by having a conversation “in the open” with one another.

That is a topic for another day, but if you are interested, you could

read about it here.

At any rate, the SSH protocal allows for secure access to a remote server. It involves using a username and a password, and, in many cases today, some form of two-factor authentication (i.e., you need to have your phone involved, too!). Different remote servers have different routines for logging in to them, and they are also all configured a little differently. The main servers we are concerned about in these teaching materials are:

- The Hummingbird cluster at UCSC, which is accessible by anyone with a UCSC blue username/password.

- The Alpine Supercomputer at CU Boulder which is accessible by all graduate students and faculty at CSU.

- The Sedna cluster housed at the National Marine Fisheries Service, Northwest Fisheries Science Center. This is accessible only by those Federal NMFS employees whom have been granted access.

Happily, all of these systems use SLURM for job scheduling (much more about that in the next chapter); however are a few vagaries to each of these systems that we will cover below.

7.1.1 Windows

If you are on a Windows machine, you can use the ssh utility from your Git Bash shell, but

that is a bit of a hassle from RStudio. And a better terminal emulator is available if you

are going to be accessing remote computers. It is recommended that you install and

use the program PuTTy. The steps are pretty self-explanatory

and well documented. Instead of using ssh on a command line you put a host name into

a dialog box, etc.

WHOA! I’m not a Windows person, but I just Matthew Hopken working on Windows using MobaXterm to connect to the server and it looks pretty nice.

7.1.2 Hummingbird

Directions for UCSC students and staff to login to Hummingbird are available at https://www.hb.ucsc.edu/getting-started/. If you are not on the UCSC campus network, you need to use the UCSC VPN to connect.

By default, this cluster uses tcsh for a shell rather than bash. To keep things

consistent with what you have learned about bash, you will want to automatically switch

to bash upon login. You can do this by adding a file ~/.tcshrc whose contents are:

Then, configure your bash environment with your ~/.bashrc and ~/.bash_profile as

described in Chapter 4.5.

The tmux settings (See section 7.4) in hummingbird are a little messed up as well, making

it hard to set window names that don’t get changed the moment you make another command. Therefore,

you must make a file called ~/.tmux.conf and put this line in it:

set-option -g allow-rename off7.1.3 Alpine

To get an account on the CU Boulder computing resources (which includes Alpine), see https://www.acns.colostate.edu/hpc/summit-get-started/. Account creation is automatic for graduate students and faculty. This setup requires that you get an app called Duo on your phone for doing two-factor authentication.

Instructions for logging into Summit are at https://www.acns.colostate.edu/hpc/#remote-login.

On your local machine (i.e., laptop), you might consider adding an alias to your

.bashrc that will let you type summit to issue the login command. For example:

where you replace csu_eid with your actual CSU eID.

7.2 Transferring files to remote computers

7.2.1 sftp and several systems that use it

Most Unix systems have a command called scp, which works like cp, but which is

designed for copying files to and from

remote servers using the SSH protocol for security. This works really well

if you have set up a public/private key pair to allow SSH access to your server

without constantly having to type in your password. Use of public-private keypairs is unfortunately, not

an option (as far as I can tell) on new NSF-funded clusters that use 2-factor authentication (like SUMMIT

at CU Boulder). Trying to use scp in such a context becomes an endless cycle of

entering your password and checking your phone for a DUO push. Fortunately, there are

alternatives.

7.2.1.1 Vanilla sftp

The administrators of the SUMMIT supercomputer at CU Boulder recommend

the sftp utility for transferring files from your laptop to the server.

This works reasonably well. The syntax for a CSU student or affiliate connecting to the server is

sftp csu_userEID@colostate.edu@login.rc.colorado.edu

# for example, here is mine:

sftp eriq@colostate.edu@login.rc.colorado.eduAfter doing this you have to give your eID password followed by ,push, and then

approve the DUO push request on your phone. Once that is done, you have a “line open”

to the server and can use the commands of sftp to transfer files around.

However, the vanilla version of sftp (at least on a Mac) is unbelievably limited,

because there is simply no good support for TAB completion within

the utility for navigating directories on the server or upon your laptop.

It must have been developed by troglodytes…consequently, I won’t describe

vanilla sftp further.

7.2.1.2 Windows alternatives

If you are on Windows, it looks like the makers of PuTTY also bring you PSFTP which might be useful for you for file transfer. Even better, MobaXterm has native GUI file transfer capabilities. Go for it!

7.2.1.3 A GUI solution for Mac or Windows

When you are first getting started transfering files to a server, it might be easiest to use a graphical user interface. There is a decently-supported (and freely available) application called FileZilla, that does this. You can download the FileZilla client application appropriate for your operating system (note! you download and install this on your own laptop not the server) from https://filezilla-project.org/download.php?type=client.

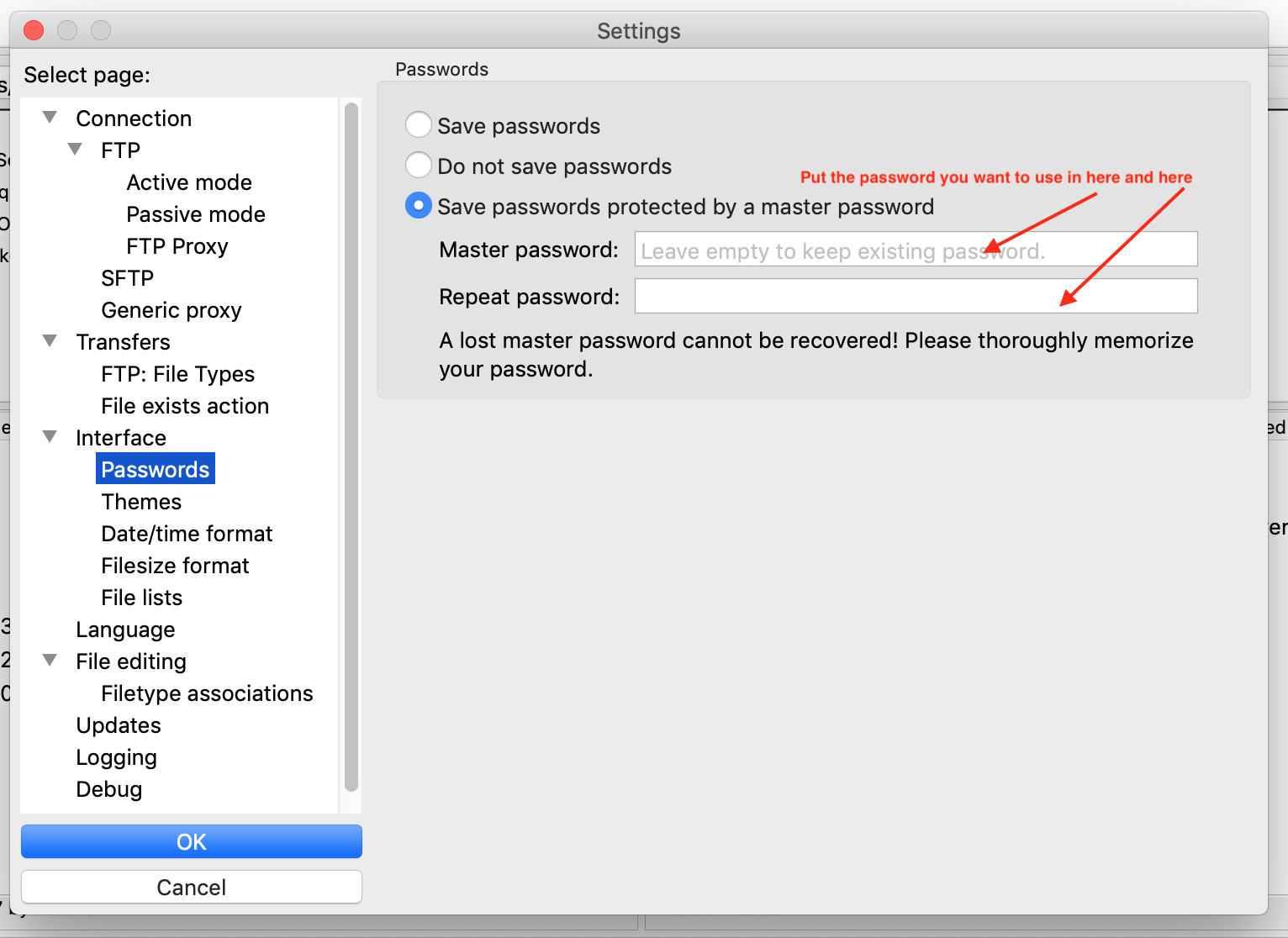

Once you install it, there are a few configurations to be done. First, go toEdit->Settings and activate

and give a master password to protect your passwords. This master password should be something that

you will remember easily. It does not have to be, and, really, should not be, the same as your Summit password.

FIGURE 7.1: Setting FileZilla’s master password

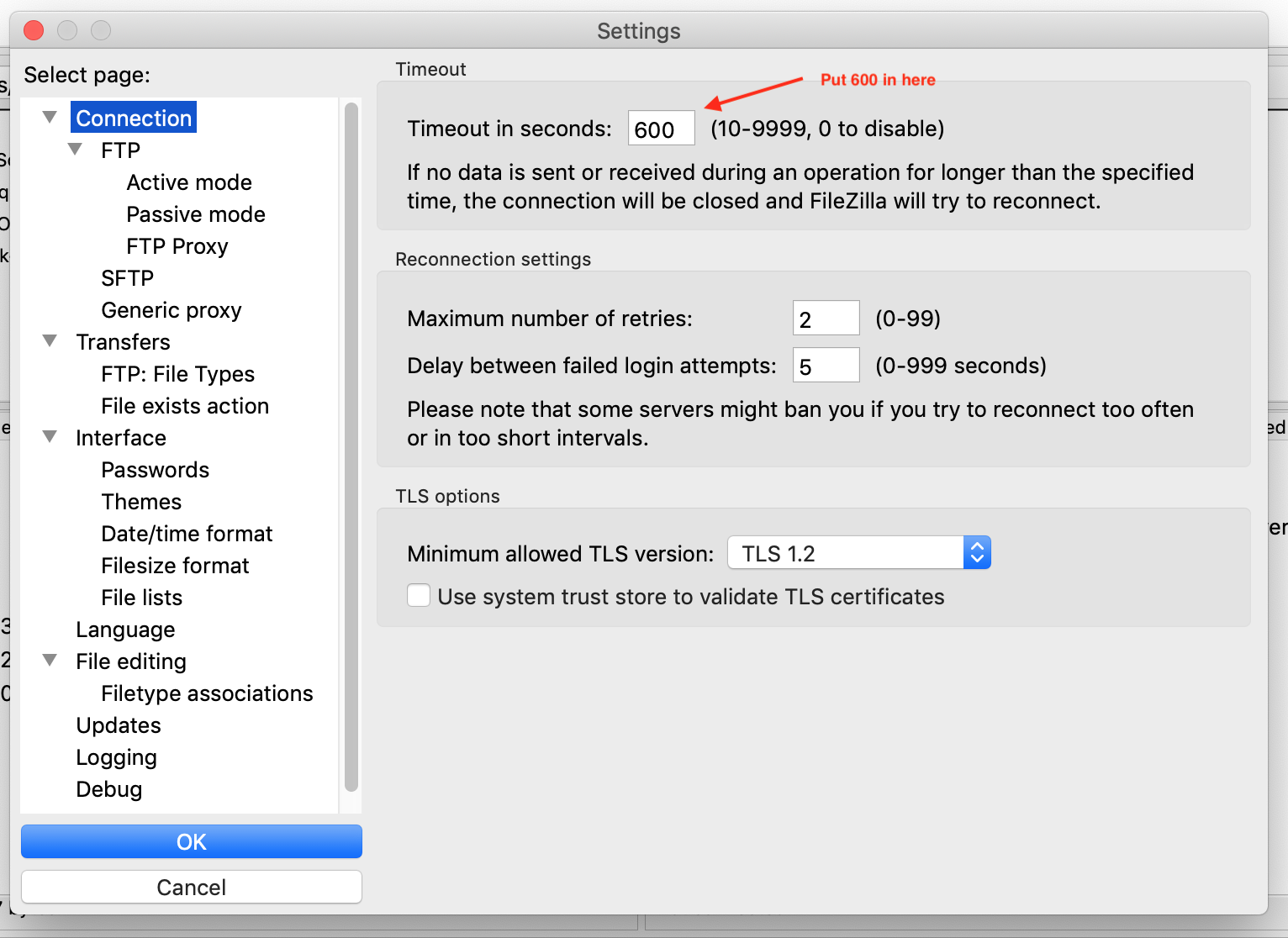

Edit->Settings request

FIGURE 7.2: Setting FileZilla’s master password

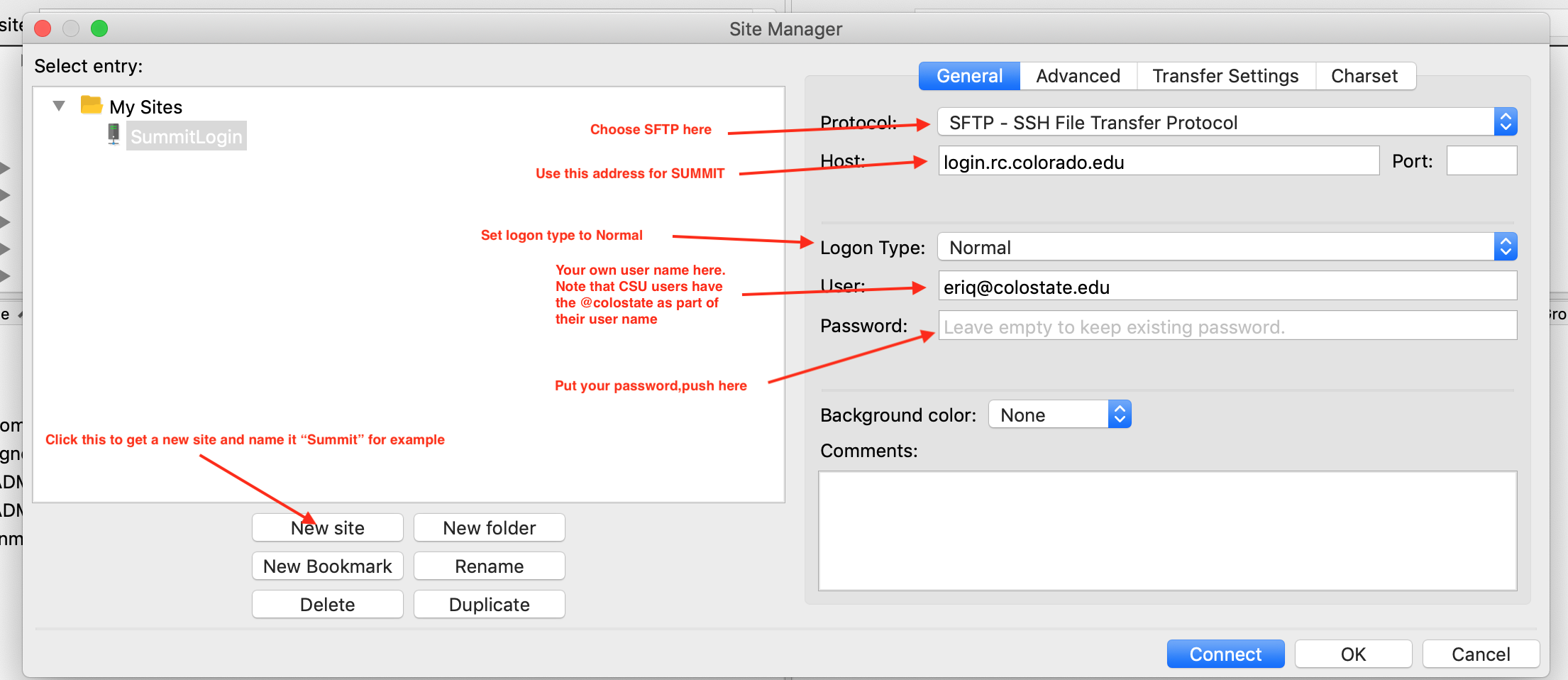

File->Site Manager and set up a connection to your remote machine.

For SUMMIT, do like this:

FIGURE 7.3: Setting FileZilla’s master password

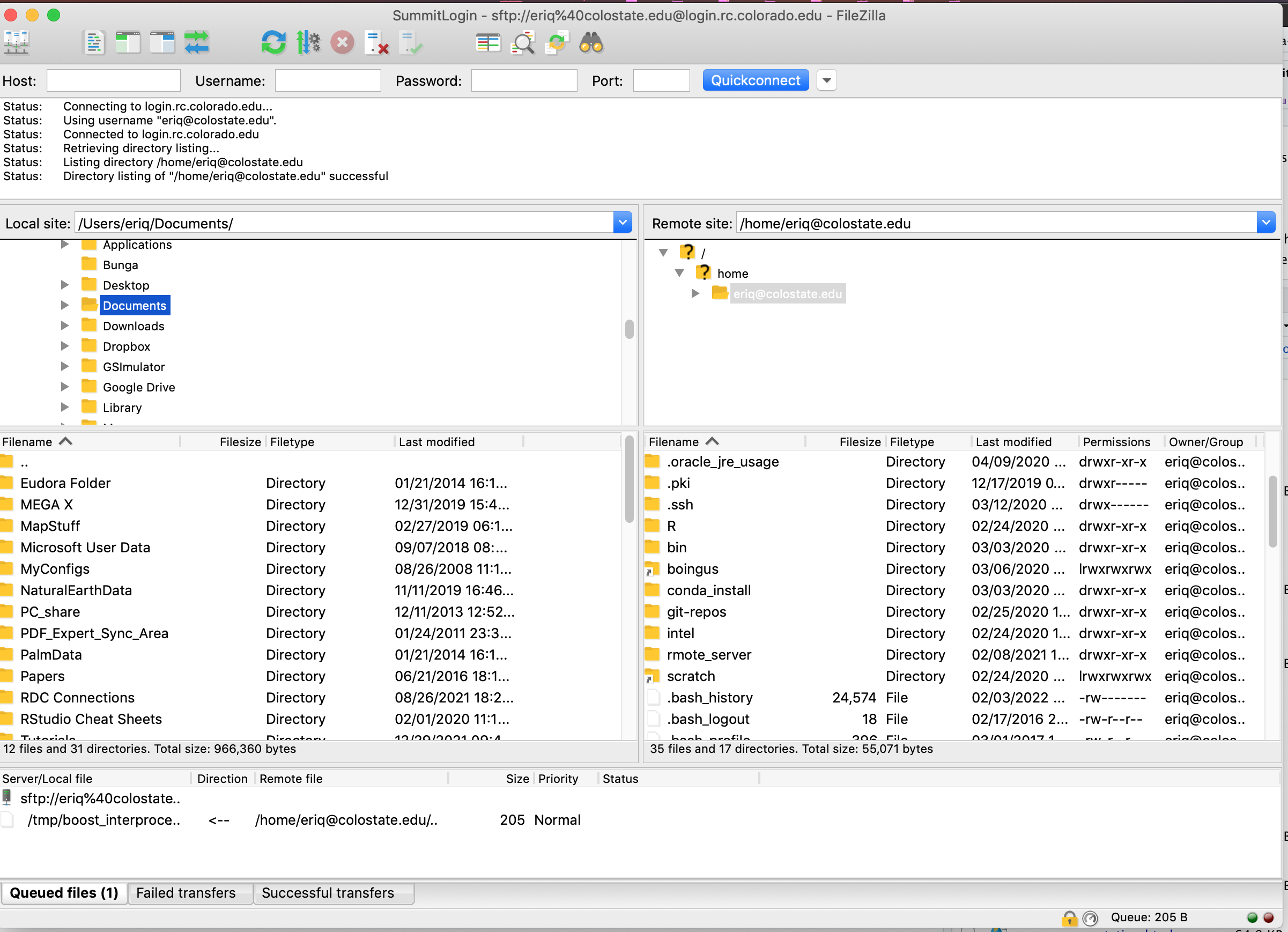

After you hit OK and have established this site, you can do File->Site Manager, then choose

your Summit connection in the left pane and hit “connect” to connect to Summit. You may have to

type in the “Master Password” that you gave to FileZilla.

After connecting, you have two file-browser panes. The one on your left is typically your local computer, and the one on the right is the server (remote computer). You can change the local or remote directory by clicking in either the left or right pane, and files and folders by dragging and dropping. The setup looks like this:

FIGURE 7.4: Setting FileZilla’s master password

7.2.1.4 lftp

If you are on a Mac, you can install lftp (brew install lftp: note that I need to write

a section about installing command line utilities via homebrew somewhere in this handbook).

lftp provides the sort of TAB completion of paths that you, by now, will have come to

know and love and expect.

Before you connect to your server with lftp there are a few customizations that you will

want to do in order to get nicely colored output, and to avoid having to login repeatedly

during your lftp session. You must make a file on your laptop called ~/.lftprc and put

the following lines in it:

set color:dir-colors "rs=0:di=01;36:fi=01;32:ln=01;31:*.txt=01;35:*.html=00;35:"

set color:use-color true

set net:idle 5h

set net:timeout 5hNow, to connect to SUMMIT with lftp, you use this syntax (shown for my username):

That can be a lot to type, so I would recommend putting something this in your

.bashrc:

so you can just type summit_ftp (which will TAB complete…) to launch that command.

After you issue that command, you put in your password (on SUMMIT, followed by ,push). lftp then caches your

password, and will re-issue it, if necessary, to execute commands. It doesn’t actually send your

password until you try a command like cls. On the SUMMIT system, with the default lftp settings,

after 3 minutes of idle time, when you issue an sftp command on the server, you will have to approve

access with the DUO app on your phone again. However, the line last two lines in the

~/.lftprc file listed above ensure that your connection to SUMMIT will stay active even

through 5 hours of idle time, so you don’t have to keep clicking DUO pushes on your phone.

After 5 hours, if you try issuing a command to the server in lftp, it will use your cached

password to reconnect to the server. On SUMMIT, this means that you only need to deal with

approving a DUO push again—not re-entering your password. If you are working on SUMMIT daily,

it makes sense to just keep one Terminal window open, running lftp, all the time.

Once you have started your lftp/sftp session this way, there are some important things to keep in mind.

The most important of which is that the lftp session you are in maintains a current working directory

on both the server and on your laptop. We will call these the server working directory and

the laptop working directory, respectively, (Technically, we ought to call the laptop working directory the client working directory

but I find that is confusing for people, we we will stick with laptop.)

There are two different commands to see what each

current working directory is:

pwd: print the server working directorylpwd: print laptop working directory (the precedinglstands for local).

If you want to change either the server or the laptop current working directory you use:

cdpath : change the server working directory to pathlcdpath : change the laptop working directory to path.

Following lcd, TAB-completion is done for paths on the laptop, while following

cd, TAB-completion is done for paths on the server.

If you want to list the contents of the different directories on the servers you use:

cls: list things in the server working directory, orclspath : list things in path on the server.

Note that cls is a little different than the ls command that comes

with sftp. The latter command always prints in long format and does not play

nicely with colorized output. By contrast, cls is part of lftp and it

behaves mostly like your typical Unix ls command, taking options like -a, -l and -d, and

it will even do cls -lrt. Type help cls at the lftp prompt for more information.

If you want to list the contents of the different directories on your laptop, you

use ls but you preface it with a !, which means “execute the following on my

laptop, not the server.” So, we have:

!ls: list the contents of the laptop working directory.!lspath : list the contents of the laptop path path.

When you use the ! at the beginning of the line, then all the TAB completion occurs

in the context of the laptop current working directory. Note that with the !

you can do all sorts of typical shell commands on your laptop from within the lftp

session. For example !mkdir this_on_my_laptop or !cat that_file, etc.

If you wish to make a directory on the server, just use mkdir. If you wish to

remove a file from the server, just use rm. The latter works much like it does in

bash, but does not seem to support globbing (use mrm for that!) In fact, you can

do a lot of things (like cat and less) on the server

as if you had a bash shell running on it through an

SSH connection. Just type those commands at the lftp prompt.

7.2.1.5 Transferring files using lftp

To this point, we haven’t even talked about our original goal with lftp, which

was to transfer files from our laptop to the server or from the server to our laptop.

The main lftp commands for those tasks are: get, put, mget, mput, and mirror—it is

not too much to have to remember.

As the name suggests, put is for putting files from your laptop onto the server. By default it

puts files into the server working directory. Here is an example:

If you want to put the file into a different directory on the server (that must already exist)

you can use the -O option:

The command get works in much the same way, but in reverse: you are getting things

from the server to your laptop. For example:

# copy to laptop working directory

get serverFile_1 serverFile1_2

# copy to existing directory laptop_dest_dir

get -O laptop_dest_dir serverFile_1 serverFile1_2Neither of the commands get or put do any of the pathname expansion (or “globbing” as it

we have called it) that you will be familiar with from the bash shell. To effect that sort

of functionality you must use mput and mget, which, as the m prefix in the

command names suggests, are the “multi-file” versions of put and get. Both of these

commands also take the -O option, if desired, so that the above commands could be

rewritten like this:

Finally, there is not a recursive option, like there is with cp, to any of get, put, mget,

or mput. Thus, you cannot use any of those four to put/get entire directories on/from the

server. For that purpose, lftp has reserved the mirror command. It does what it sounds like:

it mirrors a directory from the server to the laptop. The mirror command can actually

be used in a lot of different configurations (between two remote servers, for example) and

with different settings (for example to change only pre-existing files older than

a certain date).

However, here, we will demonstrate only its common use case

of copying directories between a server and laptop here.

To copy a directory dir, and its contents, from your server to your

laptop current directory you use:

To copy a directory ldir from your laptop to your server current directory you

use -R which transmits the directory in the reverse direction:

Learning to use lftp will require a little bit more of your time, but it is worth

it, allowing you to keep a dedicated terminal window open for file transfers with sensible

TAB-completion capability.

7.2.2 git

Most remote servers you work on will have git by default.

If you are doing all your work on a project within a single

repository, you can use git to keep scripts and other files

version-controlled on the server. You can also push and pull files

(not big data or output files!) to GitHub, thus keeping things backed up

and version controlled, and providing a useful way to synchronize scripts

and other files in your project between the server and your laptop.

Example:

- write and test scripts on your laptop in a repo called

my-project - commit scripts on your laptop and push them to GitHub in a repo also

called

my-project - pull

my-projectfrom GitHub to the server. - Try running your scripts in

my-projecton your server. In the process, you may discover that you need to change/fix some things so they will run correctly on the server. Fix them! - Once things are fixed and successfully running on the server, commit those changes and push them to GitHub.

- Update the files on your laptop so that they reflect the changes you

had to make on the server, by pulling

my-projectfrom GitHub to your laptop.

7.2.2.1 Configuring git on the remote server

In order to make this sort of worklow successful, you first need to ensure that you have set up git on your remote server. Doing so involves:

- establishing your name and email that will be used with your git commits made from the server.

- Ensuring that git password caching is set up so you don’t always have to type your GitHub password when you push and pull.

- configuring your git text editor to be something that you know how to use.

It can be useful give yourself a git name on the server that reflects the fact that the changes you are committing were made on the server.

For example, for my own setup on the Summit cluster at Boulder, I might do my git configurations by issuing these commands on the command line on the server:

git config --global user.name "Eric C. Anderson (From Summit)"

git config --global user.email eriq@rams.colostate.edu

git config --global core.editor nanoIn all actuality, I tend to set my editor to be vim or emacs, because those are

more powerful editors and I am familiar with then; however, if you are new to Unix,

then nano is an easy-to-use editor, and one is less likely to get “stuck” inside of it, as can happen in vim.

You should set configurations on your server appropriate to yourself (i.e., with your name and email and preferred text editor). Once these configurations are set, you are ready to start cloning repositories from GitHub and then pushing and pulling them, as well.

To this point, we have always done those actions from within RStudio. On a remote server, however, you will have to do all these actions from the command line. That is OK, it just requires learning a few new things.

The first, and most important, issue to understand is that if you want to push new changes back to a repository that is on your GitHub account, GitHub needs to know that you have privileges to do so. Back in the days when you could make authenticated https connections to GitHub, there were some tricks to this. But, since all your connections to GitHub must now be done with SSH, it has actually gotten a lot easier (but it involves setting up SSH keys, as described in the next section).

7.2.2.2 Using git on the remote server

When on the server, you don’t have the convenient RStudio interface to git, so you have to use git commands on the command line. Fortunately these provide straightforward, command-line analogies to the RStudio GUI git interface you have become familiar with.

Intead of having an RStudio Git panel that shows you files that are new or

have been modified, etc., you use git status in your repo to give

a text report of the same.

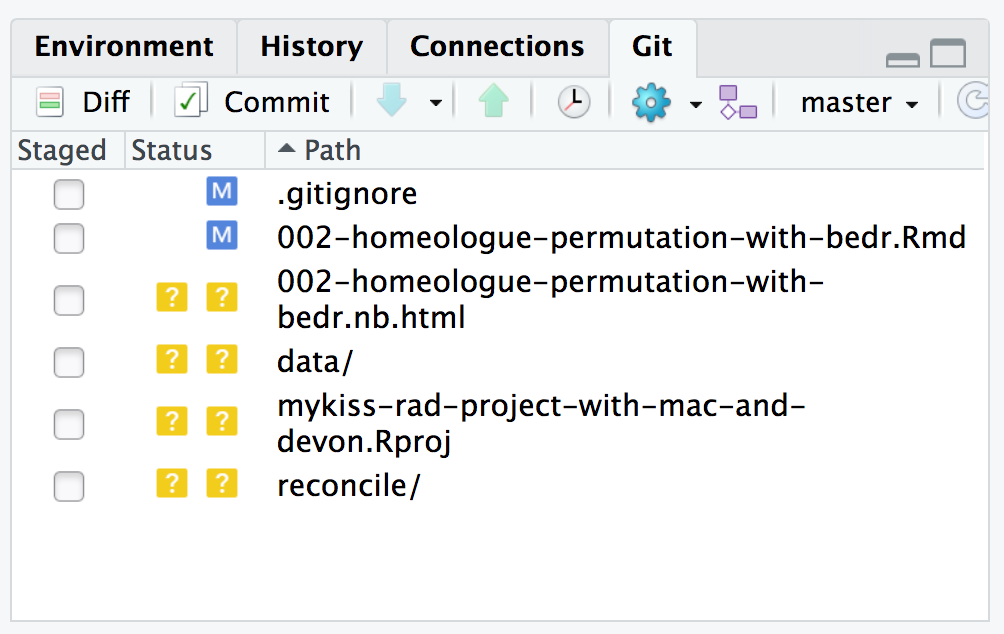

FIGURE 7.5: An example of what an RStudio git window might look like.

That view is merely showing you a graphical view of the output of

the git status command run at the top level of the repository which

looks like this:

% git status

On branch master

Changes not staged for commit:

(use "git add <file>..." to update what will be committed)

(use "git checkout -- <file>..." to discard changes in working directory)

modified: .gitignore

modified: 002-homeologue-permutation-with-bedr.Rmd

Untracked files:

(use "git add <file>..." to include in what will be committed)

002-homeologue-permutation-with-bedr.nb.html

data/

mykiss-rad-project-with-mac-and-devon.Rproj

reconcile/

no changes added to commit (use "git add" and/or "git commit -a")Aha! Be sure to read that and understand that the output tells you which files are tracked by git and Modified (blue M in RStudio) and which are untracked (Yellow ? in RStudio).

If you wanted to see a report of the changes in the files relative

to the currently committed version, you could use git diff, passing

it the file name as an argument. We will see an example of that below…

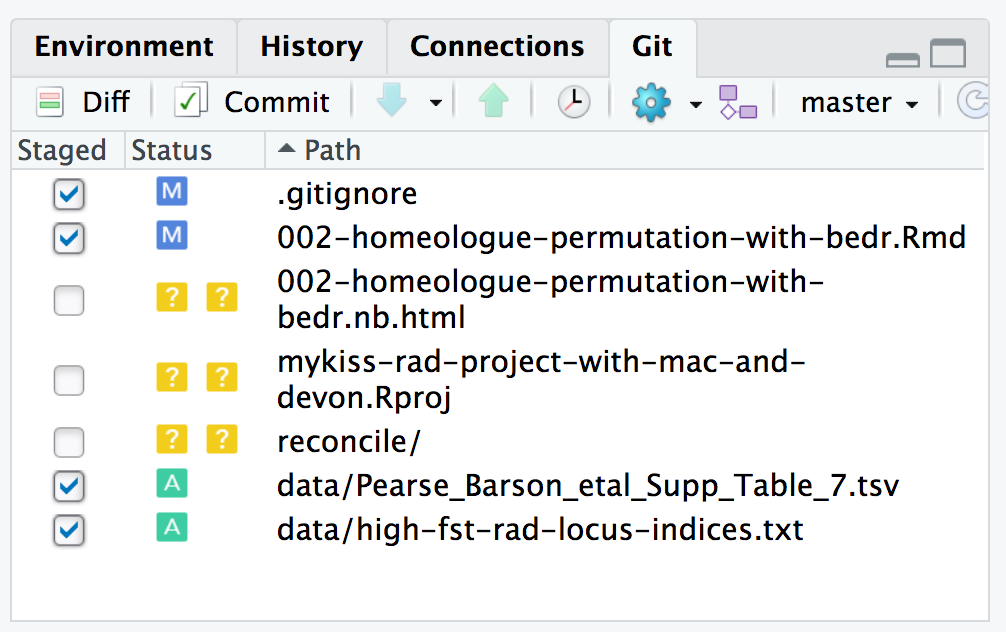

git you first must

stage them. In RStudio you do that by clicking the little button to

the left of the file or directory in the Git window. For example,

if we clicked the buttons for the data/ directory, as well as for

.gitignore and 002-homeologue-permutation-with-bedr.Rmd, we would

have staged them and it would look like Figure 7.6.

FIGURE 7.6: An example of what an RStudio git window might look like.

In order to do the equivalent operations with git on the command line

you would use the git add command, explicitly naming the files you wish to

stage for committing:

Now, if you check git status you will see:

% git status

On branch master

Changes to be committed:

(use "git reset HEAD <file>..." to unstage)

modified: .gitignore

modified: 002-homeologue-permutation-with-bedr.Rmd

new file: data/Pearse_Barson_etal_Supp_Table_7.tsv

new file: data/high-fst-rad-locus-indices.txt

Untracked files:

(use "git add <file>..." to include in what will be committed)

002-homeologue-permutation-with-bedr.nb.html

mykiss-rad-project-with-mac-and-devon.Rproj

reconcile/It tells you which files are ready to be committed!

In order to commit the files to git you do:

And then, to push them back to GitHub (if you cloned this repository from GitHub), you can simply do:

That syntax is telling git to push the master branch (which is

the default branch in a git repository), to the repository labeled as

origin, which will be the GitHub repository if you cloned the repository

from GitHub. (If you are working with a different git branch than master,

you would need to specify its name here. That is not difficult, but is

beyond the scope of this chapter.)

Now, assuming that we cloned the alignment-play repository to our

server, here are the steps involved in editing a file, committing the

changes, and then pushing them back to GitHub. The command in the following

is written as [alignment-play]--% which is telling us that we are in the

alignment-play repository.

# check git status

[alignment-play]--% git status

# On branch master

nothing to commit, working directory clean

# Aha! That says nothing has been modified.

# But, now we edit the file alignment-play.Rmd

[alignment-play]--% nano alignment-play.Rmd

# In this case I merely added a line to the YAML header.

# Now, check status of the files:

[alignment-play]--% git status

# On branch master

# Changes not staged for commit:

# (use "git add <file>..." to update what will be committed)

# (use "git checkout -- <file>..." to discard changes in working directory)

#

# modified: alignment-play.Rmd

#

no changes added to commit (use "git add" and/or "git commit -a")

# We see that the file has been modified.

# Now we can use git diff to see what the changes were

[alignment-play]--% git diff alignment-play.Rmd

diff --git a/alignment-play.Rmd b/alignment-play.Rmd

index 9f75ebb..b389fae 100644

--- a/alignment-play.Rmd

+++ b/alignment-play.Rmd

@@ -3,6 +3,7 @@ title: "Alignment Play!"

output:

html_notebook:

toc: true

+ toc_float: true

---

# The output above is a little hard to parse, but it shows

# the line that has been added: " toc_float: true" with a

# "+" sign.

# In order to commit the changes, we do:

[alignment-play]--% git add alignment-play.Rmd

[alignment-play]--% git commit

# after that, we are bumped into the nano text editor

# to write a short message about the commit. After exiting

# from the editor, it tells us:

[master 001e650] yaml change

1 file changed, 1 insertion(+)

# Now, to send that new commit to GitHub, we use git push origin master

[alignment-play]--% git push origin master

Password for 'https://eriqande@github.com':

Counting objects: 5, done.

Delta compression using up to 24 threads.

Compressing objects: 100% (3/3), done.

Writing objects: 100% (3/3), 325 bytes | 0 bytes/s, done.

Total 3 (delta 2), reused 0 (delta 0)

remote: Resolving deltas: 100% (2/2), completed with 2 local objects.

To https://eriqande@github.com/eriqande/alignment-play

0c1707f..001e650 master -> masterIn order to push to a GitHub repository from your remote server you will

need to establish a public/private SSH key pair, and share the public key

in the settings of your GitHub account. The process for this is similar to

what you have already done for accessing GitHub via git with your laptop:

follow the directions for Linux systems at:

https://happygitwithr.com/ssh-keys.html.

In order to copy your public key to GitHub, it will be easiest to

cat ~/.ssh/id_ed25519.pub to stdout and then copy it from your terminal to

GitHub.

Finally, if after pushing those changes to GitHub, we then pull them

down to our laptop, and make more changes on top of them and push those

back to GitHub, we can retrieve from GitHub to the server those changes we

made on our laptop with git pull origin master. In other words, from the

server we simply issue the command:

7.2.3 Globus

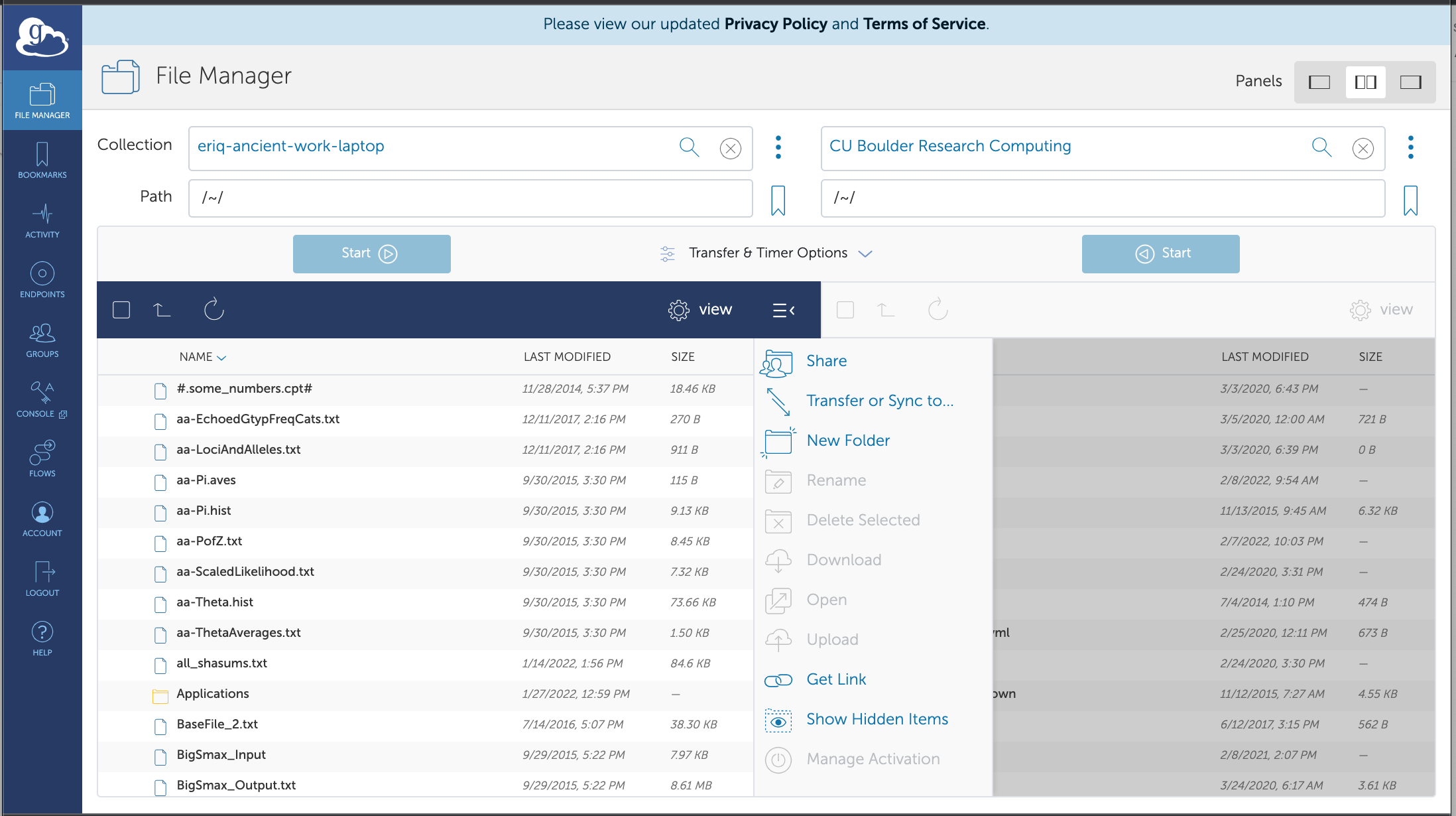

Globus is a file transfer system for high performance computing that was developed long ago by a group at the University of Chicago. If you work at an institution that has a subscription to the Globus system (as is the case with Colorado State University!), then it is quite easy to use it.

In the Globus model, files get transferred between different “endpoints.” Which are typically file servers on large university computing systems. You as a user are entitled to initiate transfers between the endpoints to which you have access rights. You can initiate these transfers using a web interface through your web browser. This makes is incredibly convenient, especially if you want to transfer large files between different computing clusters that are endpoints on the Globus network.

Addtionally, Globus provides a small software application that can turn your own laptop or your desktop workstation into a Globus endpoint, allowing you to initiate data transfers between your laptop/desktop and the cluster. Globus is a well-tested and robust system, so, since it is offered for Colorado State University students and faculty, it is well worth using.

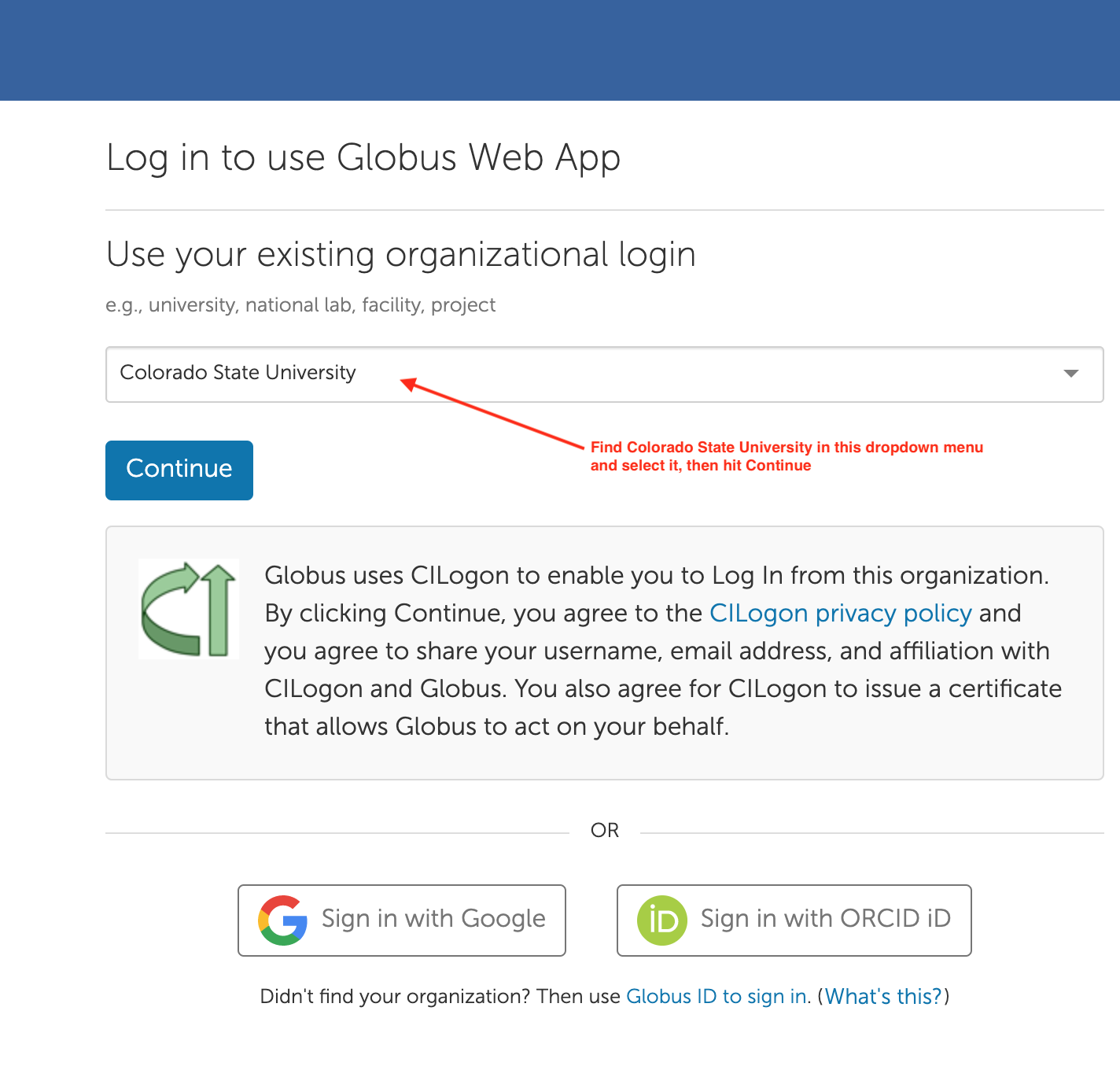

The steps to using it are:

Sign in to Globus as a Colorado State Affiliate by going to https://www.globus.org/app/login, and finding Colorado State University in the dropdown menu, and hitting continue.

When you do that the first time, you might need to agree to using CILogin. Do so.

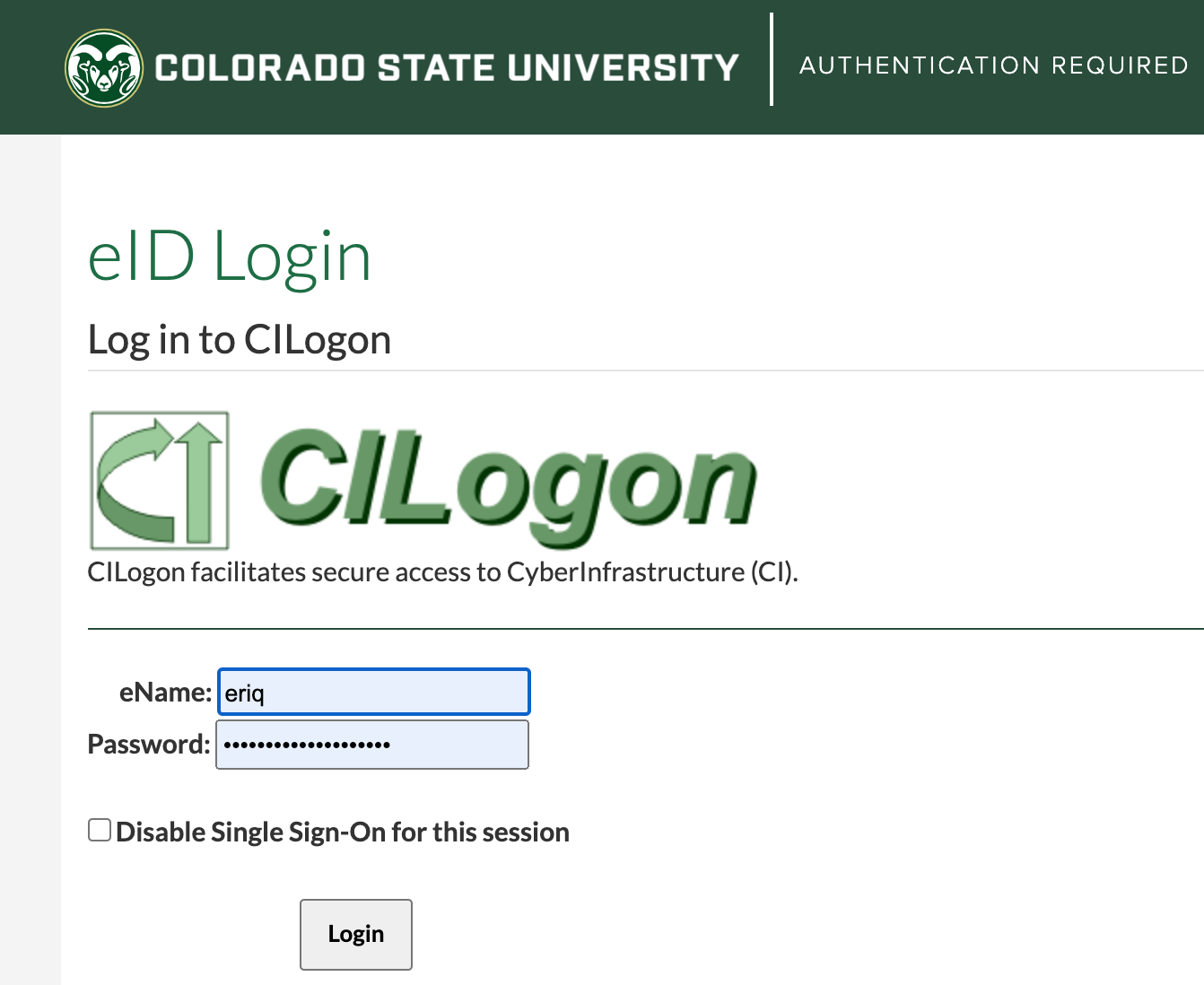

When you do that the first time, you might need to agree to using CILogin. Do so.You are then taken to a page to authenticate with CSU—it is the familiar eID login. Login to it. For me it looks like this:

After authenticating, you might be taken to a page that looks like this:

To be honest, I don’t know what this is about. I think it is Globus pitching its paid

options. Whatever….You don’t need it.

To be honest, I don’t know what this is about. I think it is Globus pitching its paid

options. Whatever….You don’t need it.

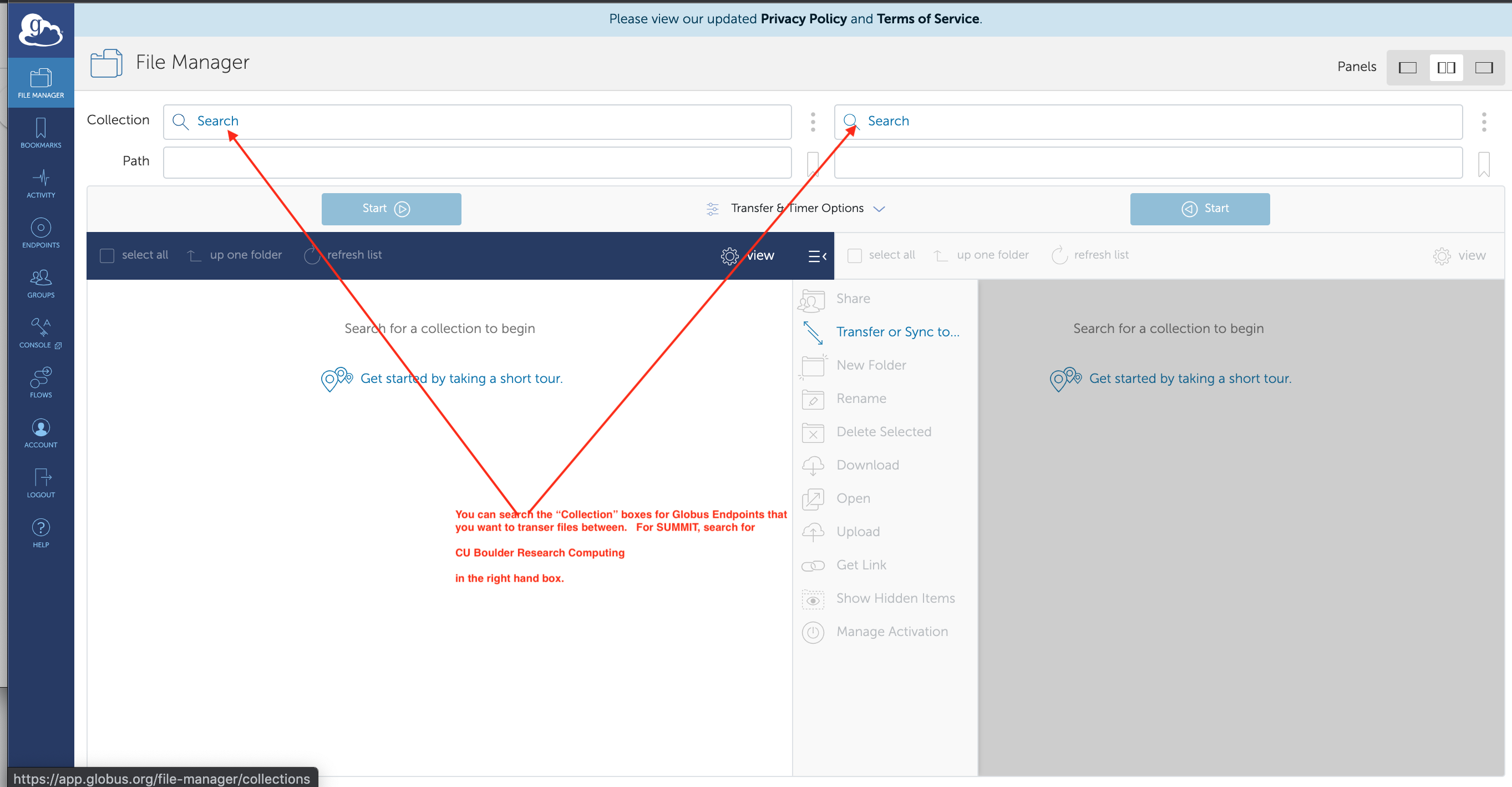

Instead, proceed directly to https://app.globus.org/file-manager which looks like this:

Search for

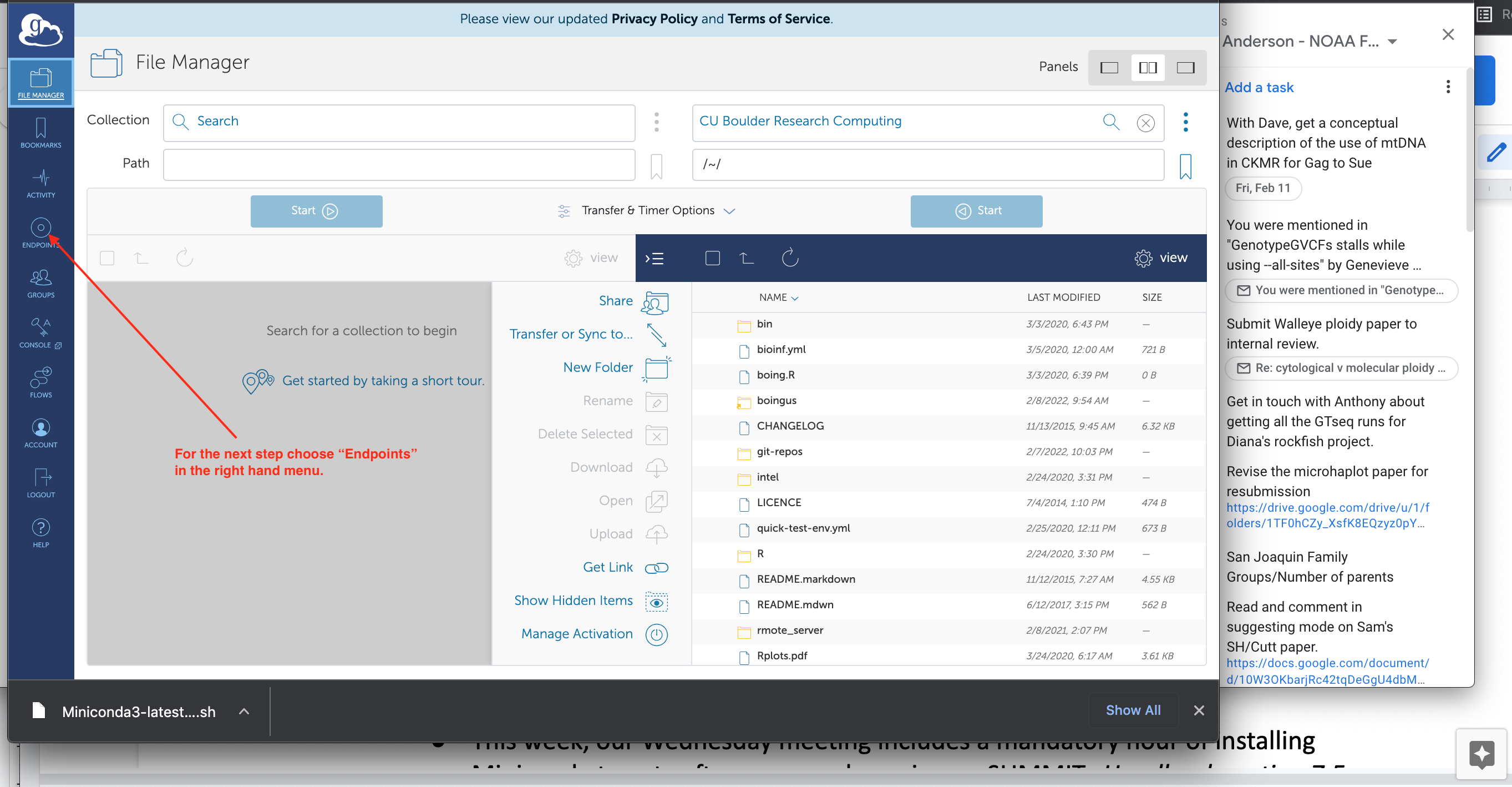

Search for CU Boulder Research Computingin the right hand box. When you find it and select it, you should see your home directory on SUMMIT in it, like this:

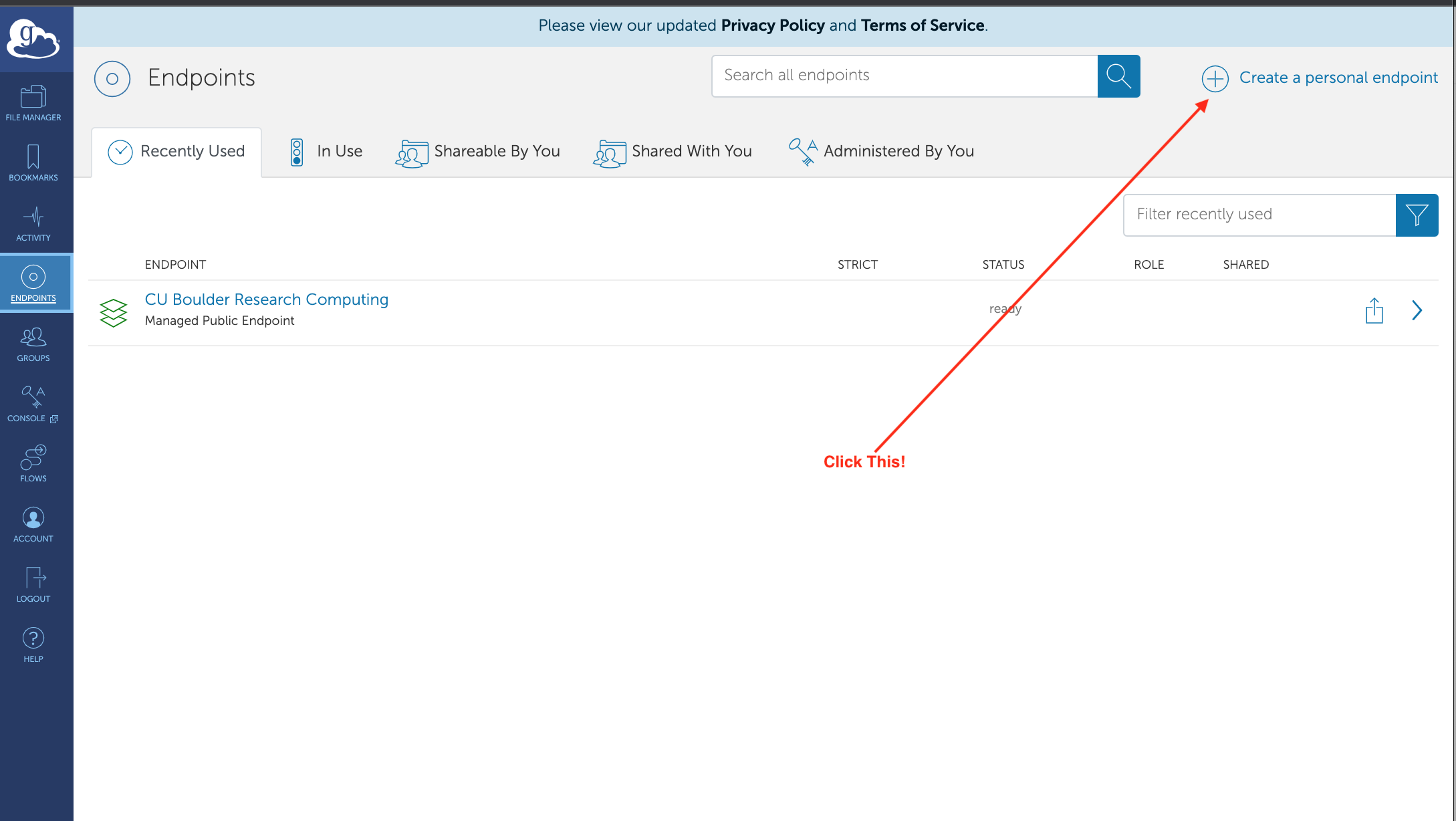

For the next step, you want to create an endpoint on your own laptop. Choose the “Endpoints” in the left menu (see red arrow in picture above). When you do that you can find the “Create a personal endpoint” link:

After clicking that, click the link to download “Globus Personal Connect” for your

operating system.

After clicking that, click the link to download “Globus Personal Connect” for your

operating system.After downloading it, install “Globus Personal Connect”.

After installing it, open “Globus Personal Connect”. If you haven’t used it before, it should ask you to log in:

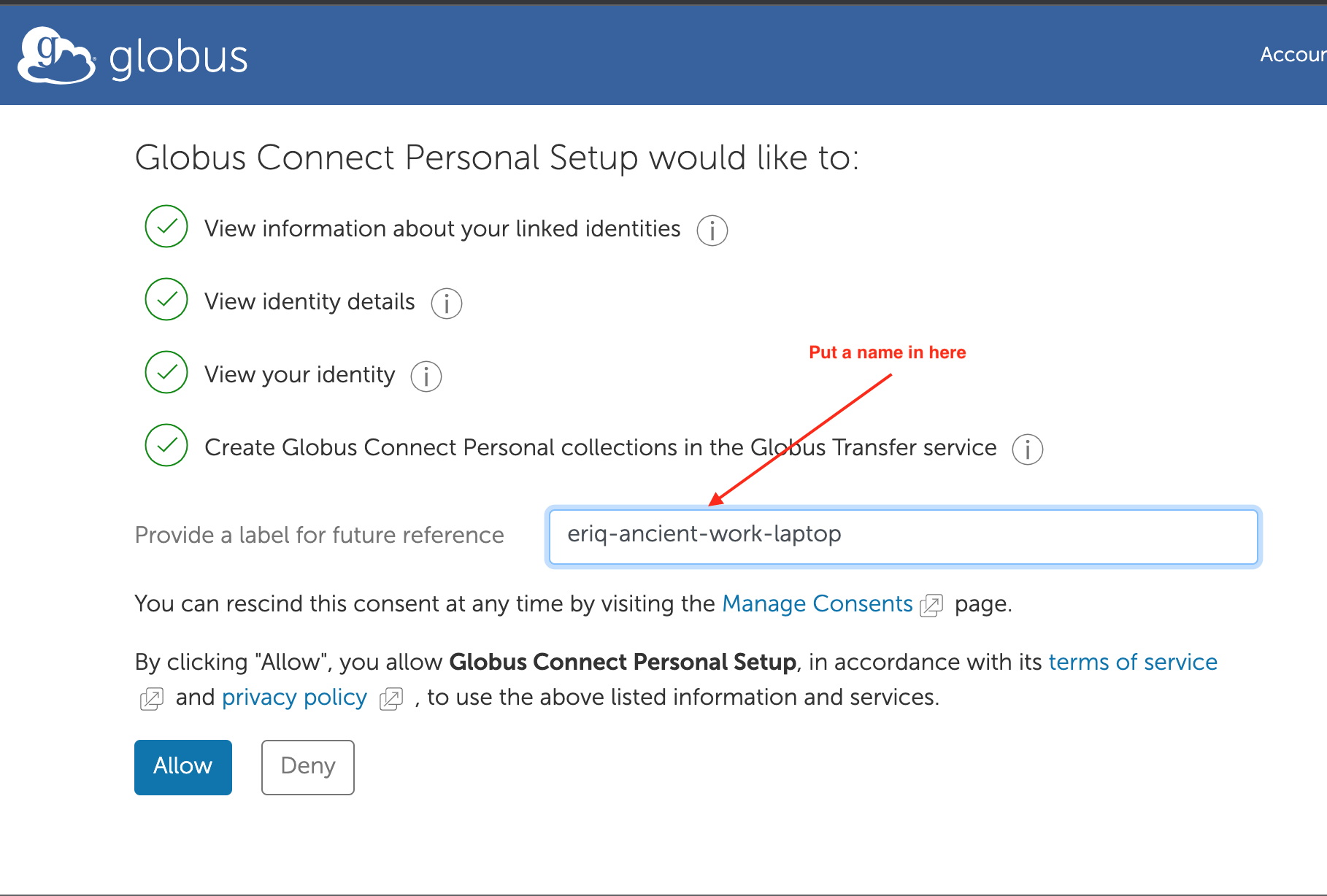

After clicking log-in enter a name by which you would like to call your endpoint, and then choose “Allow”

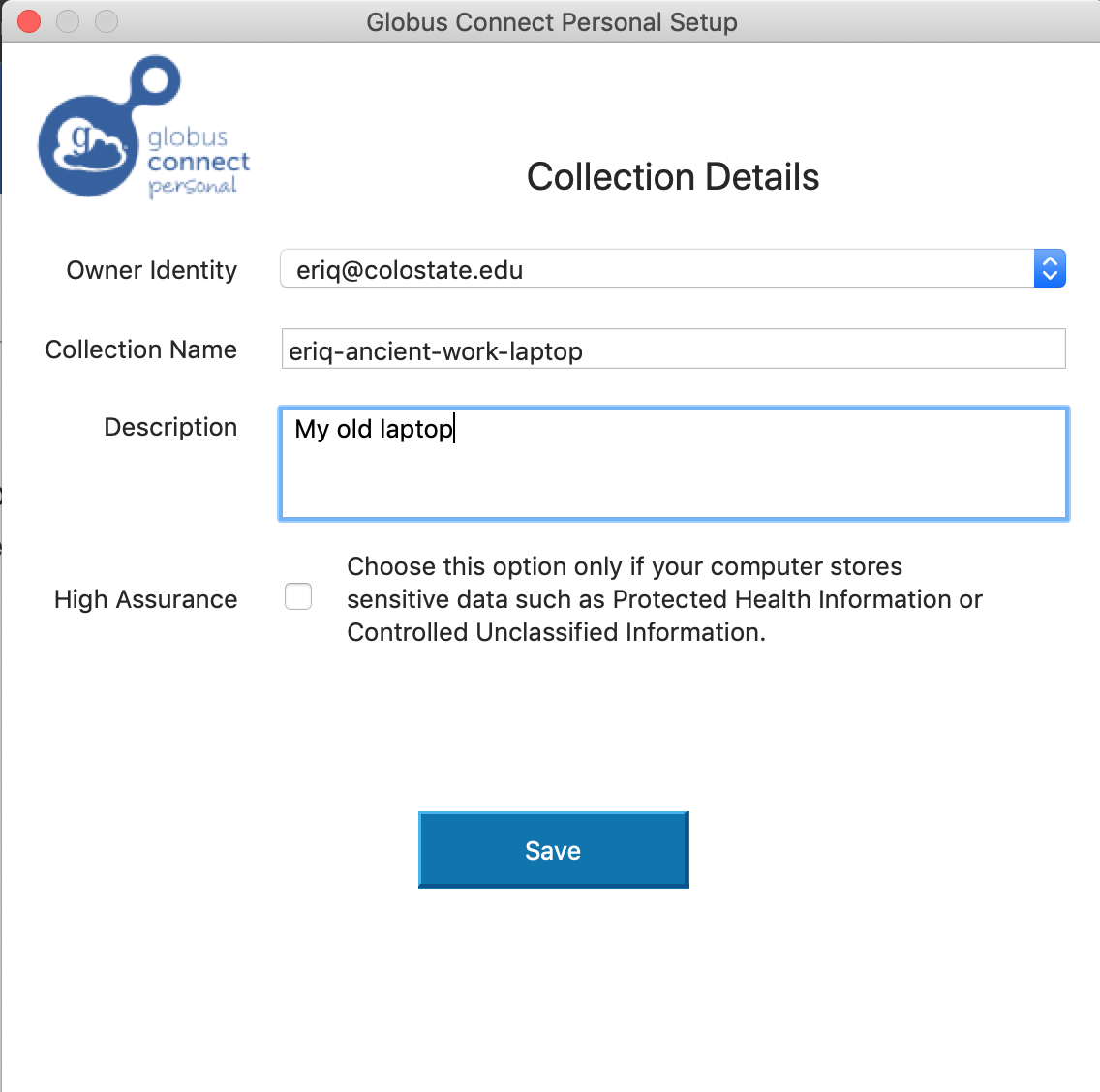

Only one more screen to go. Fill in some more names that are appropriate to your laptop/desktop and choose “Save”. (Don’t put in the names I have used…) You probably do not want to choose the High Assurance option, as that requires an extra round of work for the sys admins…

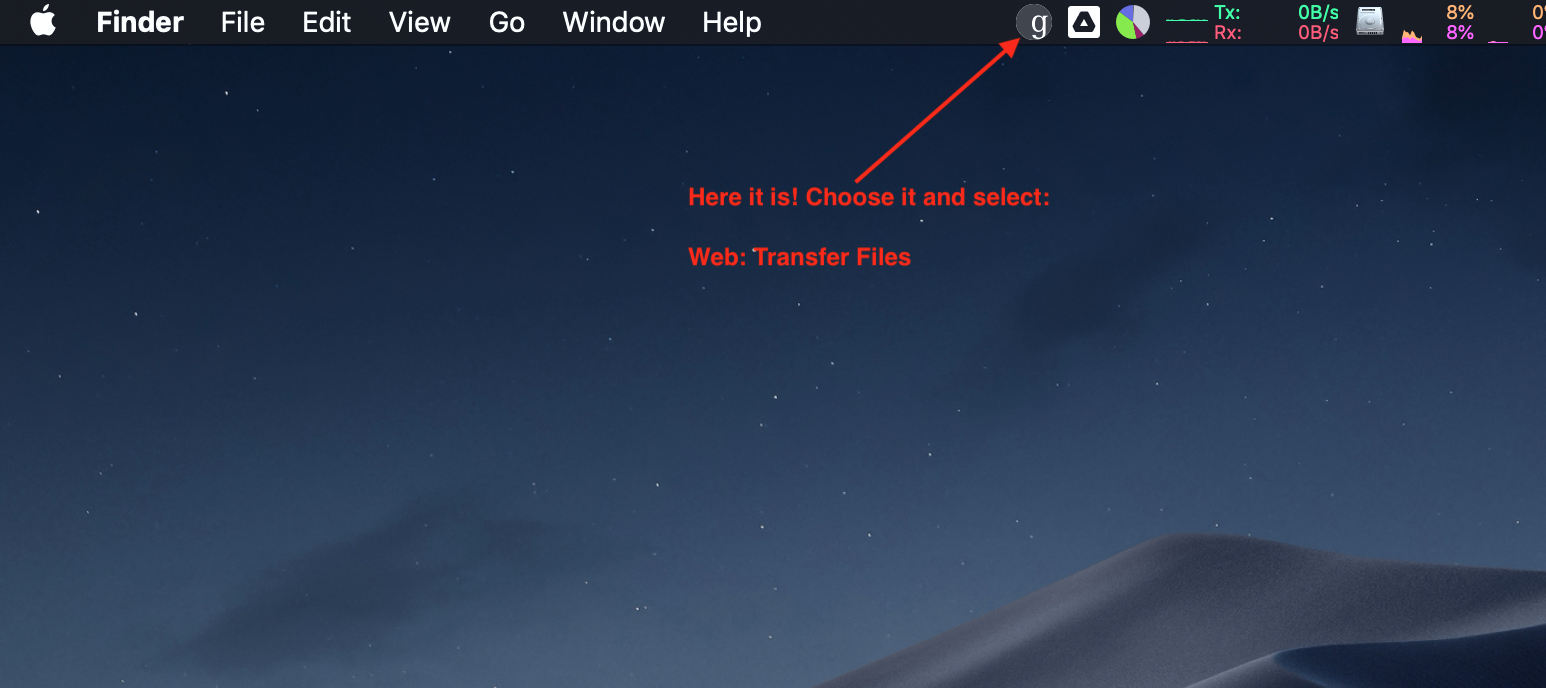

Yay! You are done. Now, on a mac, you can find the Globus icon in the menu bar and use that to start a web transfer session:

And when you get that web page, your laptop will be the left endpoint and you can search for “CU Boulder Research Computing” in the right endpoint box.

Now copying things from one to another is as easy as highlighting files from your desired source endpoint (left or right) and then hitting the “Start” button for that source endpoint.

7.2.4 Interfacing with “The Cloud”

Increasingly, data scientists and tech companies alike are keeping their data “in the cloud.” This means that they pay a large tech firm like Amazon, Dropbox, or Google to store their data for them in a place that can be accessed via the internet. There are many advantages to this model. For one thing, the company that serves the data often will create multiple copies of the data for backup and redundancy: a fire in a single data center is not a calamity because the data are also stored elsewhere, and can often be accessed seamlessly from those other locations with no apparent disruption of service. For another, companies that are in the business of storing and serving data to multiple clients have data centers that are well-networked, so that getting data onto and off of their storage systems can be done very quickly over the internet by an end-user with a good internet connection.

Five years ago, the idea of storing next generation sequencing data in the cloud might have sounded a little crazy—it always seemed a laborious task getting the data off of the remote server at the sequencing center, so why not just keep the data in-house once you have it? To be sure, keeping a copy of your data in-house still can make sense for long-term data archiving needs, but, today, cloud storage for your sequencing data can make a lot of sense. A few reasons are:

- Transferring your data from the cloud to the remote HPC system that you use to process the data can be very fast.

- As above, your data can be redundantly backed up.

- If your institution (university, agency, etc.) has an agreement with a cloud storage service that provides you with unlimited storage and free network access, then storing your sequencing data in the cloud will cost considerably less than buying a dedicated large system of hard drives for data backup. (One must wonder if service agreements might not be at risk of renegotiation if many researchers start using their unlimited institutional cloud storage space to store and/or archive their next generation sequencing data sets. My own agency’s contract with Google runs through 2021…but I have to think that these services are making plenty of money, even if a handful of researchers store big sequence data in the cloud. Nonetheless, you should be careful not to put multiple copies of data sets, or intermediate files that are easily regenerated, up in the cloud.)

- If you are a PI with many lab members wishing to access the same data set, or even if you are just a regular Joe/Joanna researcher but you wish to share your data, it is possible to effect that using your cloud service’s sharing settings. We will discuss how to do this with Google Drive.

There are clearly advantages to using the cloud, but one small hurdle remains. Most

of the time, working in an HPC environment, we are using Unix, which provides a consistent

set of tools for interfacing with other computers using SSH-based protocols (like scp

for copying files from one remote computer to another). Unfortunately, many common

cloud storage services do not offer an SSH based interface. Rather, they typically process

requests from clients using an HTTPS protocol. This protocol, which effectively runs the

world-wide web, is a natural choice for cloud services that most people will access

using a web browser; however, Unix does not traditionally come with a utility or command

to easily process the types of HTTPS transactions needed to network with

cloud storage. Furthermore, there must be some security when it comes to accessing

your cloud-based storage—you don’t want everyone to be able to access your files, so

your cloud service needs to have some way of authenticating people

(you and your labmates for example) that are authorized to access your data.

These problems have been overcome by a utility called rclone, the product of a

comprehensive open-source software project that brings the functionality of the

rsync utility (a common Unix tool used to synchronize and mirror file systems)

to cloud-based storage. (Note: rclone has nothing to do with the R programming

language, despite its name that looks like an R package.)

Currently rclone provides a consistent interface for accessing

files from over 35 different cloud storage providers, including Box, Dropbox, Google Drive,

and Microsoft OneDrive. Binaries for rclone can be downloaded for your desktop

machine from https://rclone.org/downloads/. We will

talk about how to install it on your HPC system later.

Once rclone is installed and in your PATH, you invoke it in your terminal

with the command rclone. Before we get into the details of the various rclone subcommands,

it will be helpful to take a glance at the information rclone records when it

configures itself to talk to your cloud service. To do so, it creates a file called ~/.config/rclone/rclone.conf, where it stores information about all the different

connections to cloud services you have set up. For example, that

file on my system looks like this:

[gdrive-rclone]

type = drive

scope = drive

root_folder_id = 1I2EDV465N5732Tx1FFAiLWOqZRJcAzUd

token = {"access_token":"bs43.94cUFOe6SjjkofZ","token_type":"Bearer","refresh_token":"1/MrtfsRoXhgc","expiry":"2019-04-29T22:51:58.148286-06:00"}

client_id = 2934793-oldk97lhld88dlkh301hd.apps.googleusercontent.com

client_secret = MMq3jdsjdjgKTGH4rNV_y-NbbGIn this configuration:

gdrive-rcloneis the name by which rclone refers to this cloud storage locationroot_folder_idis the ID of the Google Drive folder that can be thought of as the root directory ofgdrive-rclone. This ID is not the simple name of that directory on your Google Drive, rather it is the unique name given by Google Drive to that directory. You can see it by navigating in your browser to the directory you want and finding it after the last slash in the URL. For example, in the above case, the URL is:https://drive.google.com/drive/u/1/folders/1I2EDV465N5732Tx1FFAiLWOqZRJcAzUdclient_idandclient_secretare like a username and a shared secret thatrcloneuses to authenticate the user to Google Drive as who they say they are.tokenare the credentials used byrcloneto make requests of Google Drive on the basis of the user.

Note: the above does not include my real credentials, as then anyone could use them to access my Google Drive!

To set up your own configuration file to use Google Drive, you will use the rclone config

command, but before you do that, you will want to wrangle a client_id from Google. Follow

the directions at https://rclone.org/drive/#making-your-own-client-id. Things are a little different from in their step

by step, but you can muddle through to get to a screen with a client_ID and a client

secret that you can copy onto your clipboard.

Once you have done that, then run rclone config and follow the prompts. A

typical session of rclone config for Google Drive access is given

here. Don’t choose to do the advanced setup; however

do use “auto config,” which will bounce up a web page and let you authenticate rclone

to your Google account.

It is worthwhile first setting up a config file on your laptop, and making sure that it is working. After that, you can copy that config file to other remote servers you work on and immediately have the same functionality.

7.2.4.1 Encrypting your config file

While it is a powerful thing to be able to copy a config file from

one computer to the next and immediately be able to access your Google

Drive account. That might (and should) also make you a little bit

uneasy. It means that if the config file falls into the wrong hands,

whoever has it can gain access to everything on your Google Drive. Clearly

this is not good. Consequently, once you have created your rclone config

file, and well before you transfer it to another computer, you must

encrypt it. This makes sense, and fortunately it is fairly easy: you can

use rclone config and see that encryption is one of

the options. When it is encrypted, use rclone config show to see what

it looks like in clear text.

The downside of using encryption is that you have to enter your password every time you make an rclone command, but it is worth it to have the security.

Here is what it looks like when choosing to encrypt one’s config file:

% rclone config

Current remotes:

Name Type

==== ====

gdrive-rclone drive

e) Edit existing remote

n) New remote

d) Delete remote

r) Rename remote

c) Copy remote

s) Set configuration password

q) Quit config

e/n/d/r/c/s/q> s

Your configuration is not encrypted.

If you add a password, you will protect your login information to cloud services.

a) Add Password

q) Quit to main menu

a/q> a

Enter NEW configuration password:

password:

Confirm NEW configuration password:

password:

Password set

Your configuration is encrypted.

c) Change Password

u) Unencrypt configuration

q) Quit to main menu

c/u/q> q

Current remotes:

Name Type

==== ====

gdrive-rclone drive

e) Edit existing remote

n) New remote

d) Delete remote

r) Rename remote

c) Copy remote

s) Set configuration password

q) Quit config

e/n/d/r/c/s/q> qOnce that file is encrypted, you can copy it to other machines for use.

7.2.4.2 Basic Maneuvers

The syntax for use is:

The “subcommand” part tells rclone what you want to do, like copy or sync, and

the “parameter” part of the above syntax is typically a path

specification to a directory or a file. In using rclone to access the

cloud there is not a root directory, like / in Unix. Instead, each remote

cloud access point is treated as the root directory, and you refer to it

by the name of the configuration followed by a colon. In our example,

gdrive-rclone: is the root, and we don’t need to add a / after it to

start a path with it. Thus gdrive-rclone:this_dir/that_dir is a

valid path for rclone to a location on my Google Drive.

Very often when moving, copying, or syncing files, the parameters consist of:

One very important point is that, unlike the Unix commands cp and mv, rclone

likes to operate on directories, not on multiple named files.

A few key subcommands:

ls,lsd, andlslare likels,ls -dandls -l

copy: copy the contents of a source directory to a destination directory. One super cool thing about this is thatrclonewon’t re-copy files that are already on the destination and which are identical to those in the source directory.

Note that the destination directory will be created if it does not already exist.

- sync: make the contents of the destination directory look just like the

contents of the source directory. WARNING This will delete files in the destination

directory that do not appear in the source directory.

A few key options:

--dry-run: don’t actually copy, sync, or move anything. Just tell me what you would have done.--progress: give me progress information when files are being copied. This will tell you which file is being transferred, the rate at which files are being transferred, and and estimated amount of time for all the files to be transferred.--tpslimit 10: don’t make any more than 10 transactions a second with Google Drive (should always be used when transferring files)---fast-list: combine multiple transactions together. Should always be used with Google Drive, especially when handling lots of files.--drive-shared-with-me: make the “root” directory a directory that shows all of the Google Drive folders that people have shared with you. This is key for accessing folders that have been shared with you.

For example, try something like:

Important Configuration Notes!! Rather than always giving the --progress

option on the command line, or always having to remember to use

--fast-list and --tpslimit 10 (and remember what they should be…),

you can set those options to be invoked “by default” whenever you use

rclone. The developers of rclone have made this possible

by setting environment variables in your ~/.bashrc.

If you have an rclone option called --fast-limit, then the corresponding

environment variable is named RCLONE_FAST_LIMIT—basically, you

start with RCLONE_ then you just

drop the first two dashes of the option name, replace the remaining dashes

with underscores, and turn it all into uppercase to make the

environment variable. So, you should, at a minimum add these

lines to your ~/.bashrc:

7.2.4.3 filtering: Be particular about the files you transfer

rclone works a little differently than the Unix utility cp. In particular,

rclone is not set up very well to copy individual files. While there is a

an rclone command known as copyto that will allow you copy a single file,

you cannot (apparently) specify multiple, individual files that you wish to copy.

In other words, you can’t do:

In general, you will be better off using rclone to copy the contents of a directory

to the inside of the destination directory. However, there are options in rclone that

can keep you from being totally indiscriminate about the files you transfer. In other words,

you can filter the files that get transferred. You can read about that at

https://rclone.org/filtering/.

For a quick example, imagine that you have a directory called Data on you Google Drive

that contains both VCF and BAM files. You want to get only the VCF files (ending with .vcf.gz, say)

onto the current working directory on your cluster. Then something like this works:

Note that, if you are issuing this command on a Unix system in a directory

where the pattern *.vcf.gz will expand (by globbing) to multiple files, you will

get an error. In that case, wrap the pattern in a pair of single quotes to keep

the shell from expanding it, like this:

7.2.4.4 Feel free to make lots of configurations

You might want to configure a remote for each directory-specific project.

You can do that by just editing the configuration file. For example,

if I had a directory deep within my Google Drive, inside a chain of folders that

looked like, say, Projects/NGS/Species/Salmon/Chinook/CentralValley/WinterRun

where I was keeping

all my data on a project concerning winter-run Chinook salmon, then it would be

quite inconvenient to type Projects/NGS/Species/Salmon/Chinook/CentralValley/WinterRun

every time I wanted to copy or sync something within that directory. Instead,

I could add the following

lines to my configuration file, essentially copying the existing configuration and

then modifying the configuration name and the root_folder_id to be the

Google Drive identifier for the folder Projects/NGS/Species/Salmon/Chinook/CentralValley/WinterRun (which

one can find by navigating to that folder in a web browser and pulling the ID from the

end of the URL.) The updated configuration could look like:

[gdrive-winter-run]

type = drive

scope = drive

root_folder_id = 1MjOrclmP1udhxOTvLWDHFBVET1dF6CIn

token = {"access_token":"bs43.94cUFOe6SjjkofZ","token_type":"Bearer","refresh_token":"1/MrtfsRoXhgc","expiry":"2019-04-29T22:51:58.148286-06:00"}

client_id = 2934793-oldk97lhld88dlkh301hd.apps.googleusercontent.com

client_secret = MMq3jdsjdjgKTGH4rNV_y-NbbGAs long as the directory is still within the same Google Drive account, you can re-use

all the authorization information, and just change the [name] part and the root_folder_id.

Now this:

puts items into Projects/NGS/Species/Salmon/Chinook/CentralValley/WinterRun on the Google Drive

without having to type that God-awful long path name.

7.2.4.5 Installing rclone on a remote machine without sudo access

The instructions on the website require root access. You don’t have to have root

access to install rclone locally in your home directory somewhere.

Copy the download link from https://rclone.org/downloads/ for

the type of operating system your remote machine uses (most likely Linux if it is a cluster).

Then transfer that with wget, unzip it and put the binary in your PATH. It will look

something like this:

wget https://downloads.rclone.org/rclone-current-linux-amd64.zip

unzip rclone-current-linux-amd64.zip

cp rclone-current-linux-amd64/rclone ~/binYou won’t get manual pages on your system, but you can always find the docs on the web.

7.2.5 Getting files from a sequencing center

Very often sequencing centers will post all the data from a single run of a machine at a secured (or unsecured) http address. You will need to download those files to operate on them on your cluster or local machine. However some of the files available on the server will likely belong to other researchers and you don’t want to waste time downloading them.

Let’s take an example. Suppose you are sent an email from the sequencing center that says something like:

Your samples are AW_F1 (female) and AW_M1 (male). You should be able to access the data from this link provided by YCGA: http://sysg1.cs.yale.edu:3010/5lnO9bs3zfa8LOhESfsYfq3Dc/061719/

You can easily access this web address using rclone. You could set up a new

remote in your rclone config to point to http://sysg1.cs.yale.edu,

but, since you will only be using this once, to get your data, it makes

more sense to just specify the remote on the command line. This can be

done by passing rclone the URL address via the --http-url option, and

then, after that, telling it what protocol to use by adding :http: to

the command. Here is what you would use to list the directories available

at the sequencing center URL:

# here is the command

% rclone lsd --http-url http://sysg1.cs.yale.edu:3010/5lnO9bs3zfa8LOhESfsYfq3Dc/061719/ :http:

# and here is the output

-1 1969-12-31 16:00:00 -1 sjg73_fqs

-1 1969-12-31 16:00:00 -1 sjg73_supernova_fqsAha! There are two directories that might hold our sequencing data.

I wonder what is in those diretories? The rclone tree command is the

perfect way to drill down into those diretories and look at their contents:

% rclone tree --http-url http://sysg1.cs.yale.edu:3010/5lnO9bs3zfa8LOhESfsYfq3Dc/061719/ :http:

/

├── sjg73_fqs

│ ├── AW_F1

│ │ ├── AW_F1_S2_L001_I1_001.fastq.gz

│ │ ├── AW_F1_S2_L001_R1_001.fastq.gz

│ │ └── AW_F1_S2_L001_R2_001.fastq.gz

│ ├── AW_M1

│ │ ├── AW_M1_S3_L001_I1_001.fastq.gz

│ │ ├── AW_M1_S3_L001_R1_001.fastq.gz

│ │ └── AW_M1_S3_L001_R2_001.fastq.gz

│ └── ESP_A1

│ ├── ESP_A1_S1_L001_I1_001.fastq.gz

│ ├── ESP_A1_S1_L001_R1_001.fastq.gz

│ └── ESP_A1_S1_L001_R2_001.fastq.gz

└── sjg73_supernova_fqs

├── AW_F1

│ ├── AW_F1_S2_L001_I1_001.fastq.gz

│ ├── AW_F1_S2_L001_R1_001.fastq.gz

│ └── AW_F1_S2_L001_R2_001.fastq.gz

├── AW_M1

│ ├── AW_M1_S3_L001_I1_001.fastq.gz

│ ├── AW_M1_S3_L001_R1_001.fastq.gz

│ └── AW_M1_S3_L001_R2_001.fastq.gz

└── ESP_A1

├── ESP_A1_S1_L001_I1_001.fastq.gz

├── ESP_A1_S1_L001_R1_001.fastq.gz

└── ESP_A1_S1_L001_R2_001.fastq.gz

8 directories, 18 filesWhoa! That is pretty cool!. From this output we see that there are

subdirectories named AW_F1 and AW_M1 that hold the files that

we want. And, of course, the ESP_A1 samples must belong to someone

else. It would be great if we could just download the files we wanted,

excluding the ones in the ESP_A1 directories. It turns out that there is!

rclone has an --exclude option to exclude paths that match certain

patterns (see Section 7.2.4.3, above). We can

experiment by giving rclone copy the --dry-run command to see which

files will be transferred. If we don’t do any filtering, we see this

when we try to dry-run copy the directories to our local directory Alewife/fastqs:

% rclone copy --dry-run --http-url http://sysg1.cs.yale.edu:3010/5lnO9bs3zfa8LOhESfsYfq3Dc/061719/ :http: Alewife/fastqs/

2019/07/11 10:33:43 NOTICE: sjg73_fqs/ESP_A1/ESP_A1_S1_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_fqs/ESP_A1/ESP_A1_S1_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_fqs/ESP_A1/ESP_A1_S1_L001_R2_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/AW_M1/AW_M1_S3_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/AW_M1/AW_M1_S3_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/AW_M1/AW_M1_S3_L001_R2_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/AW_F1/AW_F1_S2_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/AW_F1/AW_F1_S2_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/AW_F1/AW_F1_S2_L001_R2_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/ESP_A1/ESP_A1_S1_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/ESP_A1/ESP_A1_S1_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_supernova_fqs/ESP_A1/ESP_A1_S1_L001_R2_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_fqs/AW_F1/AW_F1_S2_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_fqs/AW_F1/AW_F1_S2_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_fqs/AW_F1/AW_F1_S2_L001_R2_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_fqs/AW_M1/AW_M1_S3_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_fqs/AW_M1/AW_M1_S3_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:33:43 NOTICE: sjg73_fqs/AW_M1/AW_M1_S3_L001_R2_001.fastq.gz: Not copying as --dry-runSince we do not want to copy the ESP_A1 files we see if we can exclude

them:

% rclone copy --exclude */ESP_A1/* --dry-run --http-url http://sysg1.cs.yale.edu:3010/5lnO9bs3zfa8LOhESfsYfq3Dc/061719/ :http: Alewife/fastqs/

2019/07/11 10:37:22 NOTICE: sjg73_fqs/AW_F1/AW_F1_S2_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_fqs/AW_F1/AW_F1_S2_L001_R2_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_fqs/AW_F1/AW_F1_S2_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_fqs/AW_M1/AW_M1_S3_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_fqs/AW_M1/AW_M1_S3_L001_R2_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_fqs/AW_M1/AW_M1_S3_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_supernova_fqs/AW_F1/AW_F1_S2_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_supernova_fqs/AW_F1/AW_F1_S2_L001_R2_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_supernova_fqs/AW_F1/AW_F1_S2_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_supernova_fqs/AW_M1/AW_M1_S3_L001_I1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_supernova_fqs/AW_M1/AW_M1_S3_L001_R1_001.fastq.gz: Not copying as --dry-run

2019/07/11 10:37:22 NOTICE: sjg73_supernova_fqs/AW_M1/AW_M1_S3_L001_R2_001.fastq.gz: Not copying as --dry-runBooyah! That gets us just what we want. So, then we remove the --dry-run option,

and maybe add -v -P to give us verbose output and progress information, and copy all of our files:

7.3 Editing Files on a Remote Server using a Local Text Editor

Section 7.7 discusses the value of getting good at using a text-based

text editor like vim or emacs, or even the easy-to-use nano. That is

all well and good; however, if you have become proficient with a local

(i.e., running on your laptop) text editor with powerful features and

outstanding syntax highlighting, like SublimeText, which

is available for Mac, Windows, and Linux, then it can be very nice to

be able to directly edit files on your remote server or cluster using your

laptop’s own installation of SublimeText.

It turns out that this is possible through the miracle of SSH port forwarding. Briefly, it works like this:

- When you log into your server with

sshyou tell the server to connect a remote port on the server to a local port on your laptop. This way, the server can send additional data streams back and forth through those connected ports to you. - Then on the server, you run a shell script called

rmatethat will can open a file on the server and send its contents out through the remote port. Your laptop picks up these contents on the local port and can send those contents to SublimeText using a SublimeText plugin called RemoteSubl. - Editing the contents of that file

with SublimeText feels just like editing a local file on your laptop, but when you

save your edits, SublimeText sends the changes back out through the local port to the

remote port on your server, where

rmateapplies the changes to the file on the server.

It is a great system for editing things on the remote server if you are familiar with SublimeText (which you should get familiar with, because it is a great editor!).

More detailed, step-by-step instructions on how to set this up follow.

7.3.1 Step 1: Set up your SSH config file to automatically apply port forwarding to your connections to your server

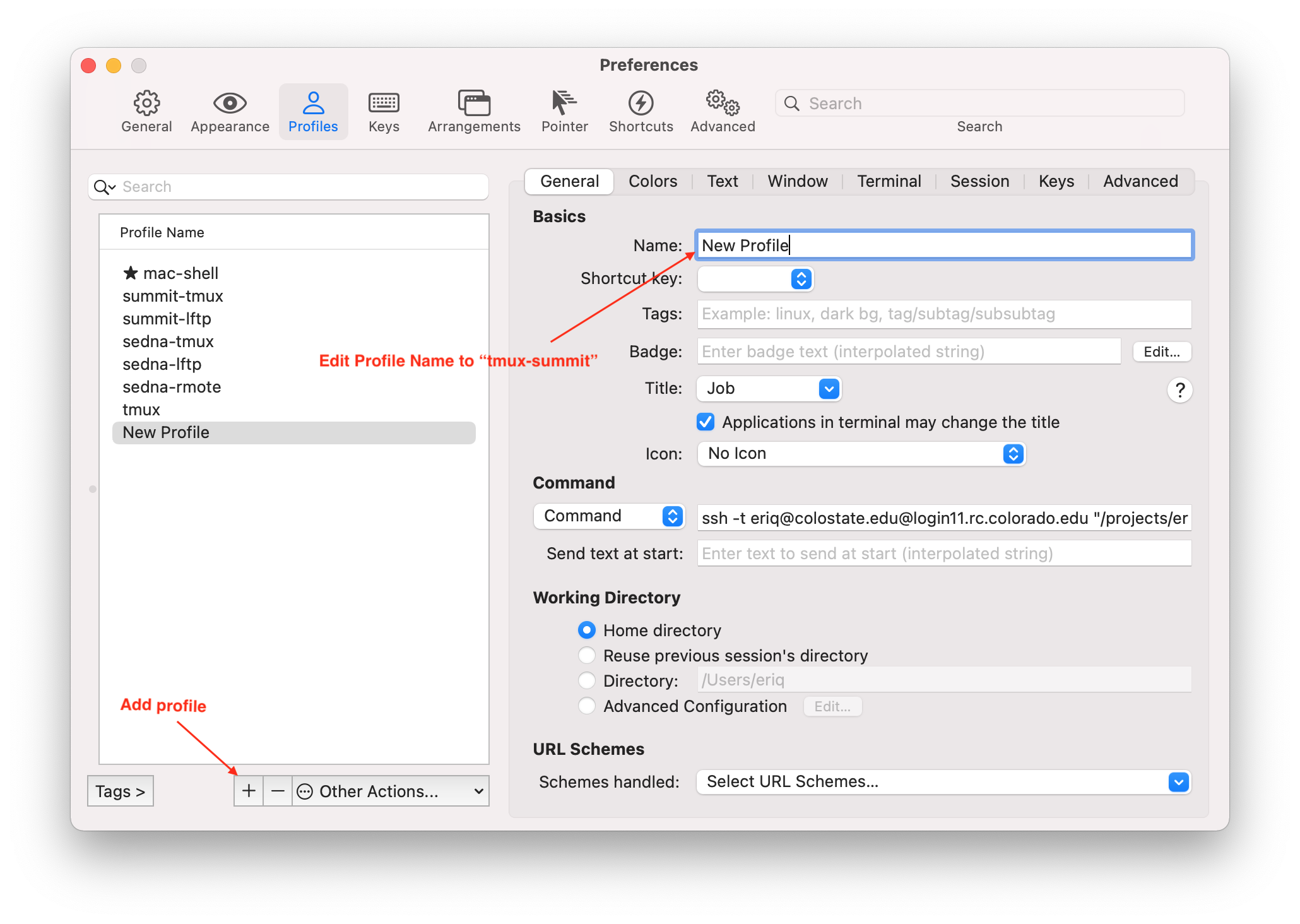

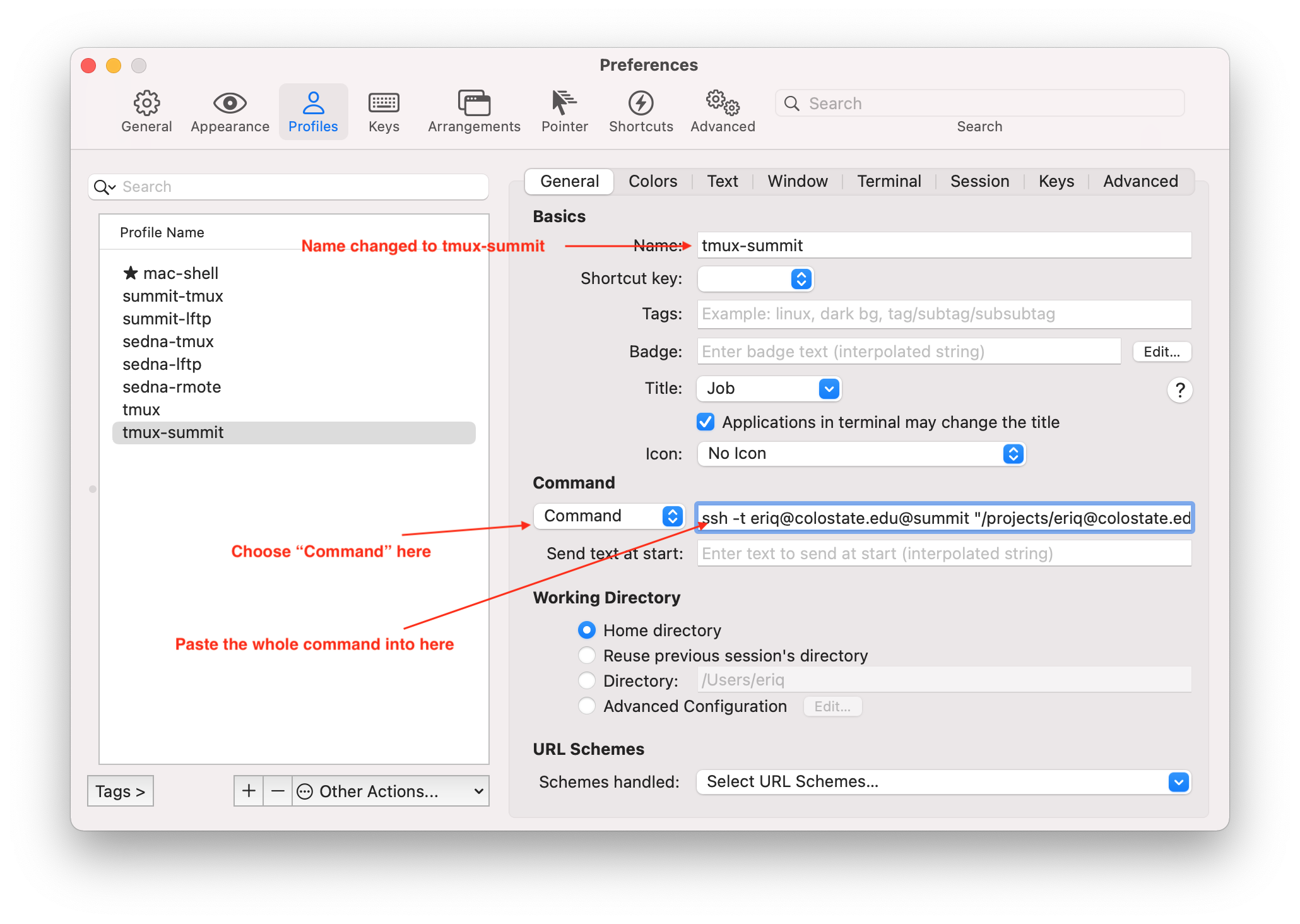

The first thing we will do is something that can especially useful if you have several servers you connect to, and you would like to have shorter names for accessing them: set up an “alias” to them in your SSH config file. Here we show how to set up such an alias to the SUMMIT cluster at Boulder.

To do so, edit your ~/.ssh/config file by adding the following lines to it:

Host summit

HostName login11.rc.colorado.edu

RemoteForward 52XXX localhost:52698

But change the XXX in 52XXX to three digits of your choice. This is important

so you aren’t trying to access the same port as another user at the same time. For

example, use the digits of your birthday, like 52122 if you were born on January 22, etc.

This step connects port number 52XXX on the server to the local port 52698 on your laptop.

Note that the login node you choose could be login12.rc.colorado.edu rather

than login11.rc.colorado.edu, or even some other number if you routinely login

to a specific login node (for example to use tmux…see Section 7.4).

Once you have done that, you should logout of SUMMIT, and then, when you log back in in the future you can use

instead of, for example,

and, when it logs you in, it will enable the port forwarding.

7.3.2 Download SublimeText and add the RemoteSubl package to it

If you haven’t already downloaded SublimeText, you should do that and you should experiment with using it. It is an outstanding text editor. You can try it for free for a long time (indefinitely, it seems), but if you find you like it, then officially buying a license for it is is a good idea.

Once you have it installed, you must install the RemoteSubl plugin. This is done

with SublimeText’s package control system. The steps to do this are:

Hit Shift-Command-P on a mac. On windows I think it is Shift-Windows-P. When you do this, sublime text will open a little text window on your screen. Type into that window:

Package Control: Install Package. You don’t have to type very much of that phrase before you see the whole phrase as a possible completion below where you are typing. When you see the full phrase, use the arrow keys to select that phrase and hit Return. If you don’t seePackage Control: Install Packageyou might seeInstall Package Control. Select that and install Package Control. After that,Package Control: Install Packageshould show up.This should give you another text box. Start typing

RemoteSublinto that window, until you see it in the possible completions. Select it from the completions (with your arrow keys, for example) and then hit return.

7.3.3 Download the rmate shell script to your server and put it on your PATH

On the server, we need the rmate command to send the contents of a file

you wish to edit to the remote port that will get forwarded to the local port

on your laptop. This command is a shell script that you can download with

wget.

As we talked about in an earlier section, you should have a directory,

~/bin that is in your PATH, because you should have a line in your

~/.bashrc file like:

If this is the case, then simply cd-ing to ~/bin on SUMMIT and running the commands:

should get you rmate and make it executable.

7.3.4 Using rmate

If everything has gone according to plan, then, if you have logged into summit

using the summit alias (i.e. using ssh username@summit), then you should

be able to edit any file on your server with:

where path/to/file represents the path to whatever file you want to

edit, and 52XXX is actually the number of the remote port you are forwarding

from, as set up in your ~/.ssh/config file. For example, in keeping with

the example above, this would be:

to edit the .bashrc file on your remote server, or,

etc.

Note that, by default, rmate will open a new tab in the currently active

SublimeText window. If you want to open the file in its own window, you

can use:

The -n option forces the opening of a new SublimeText window.

If you want to edit multiple files at once, for example, all the

files in a scripts folder on your remote machine, you can do like this:

If you have a lot of files in that folder, then keeping track of them

in SublimeText can be made a lot easier if you choose

View->Side Bar->Show Open Files from the SublimeText menu options.

This will show the names of all the open files in a side bar to the left.

Because it can be a hassle to remember your remote port number and type

it each time you use rmate, you can set up an alias in the

~/.bashrc file on your server by adding the line:

Then, on the command line, you can simply use

and open remote files on your local SublimeText.

When you have edited the file, save the change in SublimeText and then close the window. It is that easy.

Note that if you lose connection to the server (for example you close your laptop and it goes to sleep), then you will get a message telling you that SublimeText is no longer connected to any files on the server, and you will have to reconnect them if you want to edit them.

7.4 tmux: the terminal multiplexer

Many universities have recently implemented a two-factor

authentication requirement for access to their computing resources

(like remote servers and clusters). This means that every time

you login to a server on campus (using ssh for example) you must

type your password, and also fiddle with your phone. Such systems

preclude the use of public/private key pairs that historically allowed

you to access a server from a trusted client (i.e., your own secured

laptop) without having to type in a password. As a consequence, today,

opening multiple sessions on a server using ssh and two-factor

authentication requires a ridiculous amount of additional typing and

phone-fiddling, and is a huge hassle. But, when working on a remote

server it is often very convenient to have multiple separate shells that

you are working on and can quickly switch between.

At the same time. When you are working on the shell of a remote machine

and your network connection goes down, then, typically the bash session on

your remote machine will be forcibly quit, killing any jobs that you might

have been in the middle of (however, this is not the case if you submitted

those jobs through a job scheduler like SLURM. Much more on that in the

next chapter.). And, finally, in a traditional

ssh session to a remote machine, when you close your laptop, or put it

to sleep, or quit the Terminal application, all of your active bash sessions

on the remote machine will get shut down. Consequently, the next time

you want to work on that project, after you have logged

onto that remote machine you will have to go through the laborious steps

of navigating to your desired working directory, starting up any processes

that might have gotten killed, and generally getting yourself

set up to work again. That is a serious buzz kill!

Fortunately, there is an awesome utility called tmux, which is short for

“terminal multiplexer” that solves most of the problems we just described.

tmux is similar in function to a utility called screen, but it is easier

to use while at the same time being more customizable and configurable

(in my opinion). tmux is basically your ticket to working way more

efficiently on remote computers, while at the same time looking

(to friends and colleagues, at least) like

the full-on, bad-ass Unix user.

In full confession, I didn’t actually start using tmux until some

five years after a speaker at a workshop delivered an incredibly

enthusiastic presentation about tmux and how much he was in love

with it. In somewhat the same fashion that I didn’t adopt RStudio shortly

after its release, because I had my own R workflows that I had hacked

together myself, I thought to myself: “I have public/private key pairs

so it is super easy for me to just start another terminal window and login

to the server for a new session. Why would I need tmux?” I also didn’t

quite understand how tmux worked initially: I thought that I had to

run tmux simultaneously on my laptop and on the server, and that those

two processes would talk to one another. That is not the case! You

just have to run tmux on the server and all will work fine!

The upshot of that confession is that you should not be a bozo like me,

and you should learn to use tmux right now! You will thank yourself

for it many times over down the road.

7.4.1 An analogy for how tmux works

Imagine that the first time you log in to your remote server you also have the option of speaking on the phone to a super efficient IT guy who has a desk in the server room. This dude never takes a break, but sits at his desk 24/7. He probably has mustard stains on his dingy white T-shirt from eating ham sandwiches non-stop while he works super hard. This guy is Tmux.

When you first speak to this guy after logging in, you have to preface your

commands with tmux (as in, “Hey Tmux!”). He is there to help you

manage different terminal windows with different bash shells or

processes going on in them. In fact, you can think of it this way: you can

ask him to set up

a terminal (i.e., like a monitor), right there on his desk, and then create

a bunch of windows on that terminal for you—each

one with its own bash shell—without having to do a separate login for

each one. He has created all those windows, but you still get to use them.

It is like he has a miracle-mirroring device that lets you operate

the windows that are on the terminal he set up for you on his desk.

When you are done working on all those windows, you can tell Tmux that you want to detach from the special terminal he set up for you at the server. In response he says, “Cool!” and shuts down his miracle-mirroring device, so you no longer see those different windows. However, he does not shut down the terminal on his desk that he set up for you. That terminal stays on, and any of your processes happening on it keep chugging away…even after you logout from the server entirely, throw the lid down on your laptop, have drinks with your friends at Social, downtown, watch an episode of Parks and Rec, and then get a good night’s sleep.

All through the night, Tmux is munching ham sandwiches and keeping an eye on that terminal he set up for you. When you log back onto the server in the morning, you can say “Hey Tmux! I want to attach back to that terminal you set up for me.” He says, “No problem!”, turns his miracle-mirroring device back on, and in an instant you have all of the windows on that terminal back on your laptop with all the processes still running in them—in all the same working directories—just as you left it all (except that if you were running jobs in those windows, some of those jobs might already be done!).

Not only that, but, further, if you are working on the server when a local thunderstorm fries the motherboard on your laptop, you can get a new laptop, log back into the server and ask Tmux to reconnect you to that terminal and get back to all of those windows and jobs, etc. as if you didn’t get zapped. The same goes for the case of a backhoe operator accidentally digging up the fiber optic cable in your yard. Your network connection can go down completely. But, when you get it up and running again, you can say “Hey Tmux! Hook me up!” and he’ll say, “No problem!” and reconnect you to all those windows you had open on the server.

Finally, when you are done with all the windows and jobs on the terminal that Tmux set up for you, you can ask him to kill it, and he will shut it down, unplug it, and, straight out of Office Space, chuck it out the window. But he will gladly install a new one if you want to start another session with him.

That dude is super helpful!

7.4.2 First steps with tmux

The first thing you want to do to make sure Tmux is ready to help you is to simply type:

This should return something like:

/usr/bin/tmuxIf, instead, you get a response like tmux: Command not found. then tmux

is apparently not installed

on your remote server, so you

will have to install it yourself, or beg your sysadmin to do so (we will cover

that in a later chapter). If you

are working on the Summit supercomptuer in Colorado or on Hummingbird at

UCSC, then tmux is installed already. (As of Feb 16, 2020, tmux was

not installed on the Sedna cluster at the NWFSC, but I will request that it

be installed.)

In the analogy, above, we talked about Tmux setting up a terminal

in the server room. In tmux parlance, such a “terminal” is called

a session. In order to be able to tell Tmux that you want to reconnect

to a session, you

will always want to name your sessions so you will request a new

session with this syntax:

You can think of the -s as being short for “session.” So it is basically a short

way of saying, “Hey Tmux, give me a new session named froggies.”

That creates a new session called froggies, and you can imagine we’ve

named it that because we will use it for work on a frog genomics project.

The effect of this is like Tmux firing up a new terminal in his server room, making a window on it for you, starting a new bash shell in that window, and then giving you control of this new terminal. In other words, it is sort of like he has opened a new shell window on a terminal for you, and is letting you see and use it on your computer at the same time.

One very cool thing about this is that you just got a new bash shell without having to login with your password and two-factor authentication again. That much is cool in itself, but is only the beginning.

The new window that you get looks a little different. For one thing, it has a section, one line tall, that is green (by default) on the bottom. In our case, on the left side it gives the name of the session (in square brackets) and then the name of the current window within that session. On the right side you see the hostname (the name of the remote computer you are working on) in quotes, followed by the date and time. The contents in that green band will look something like:

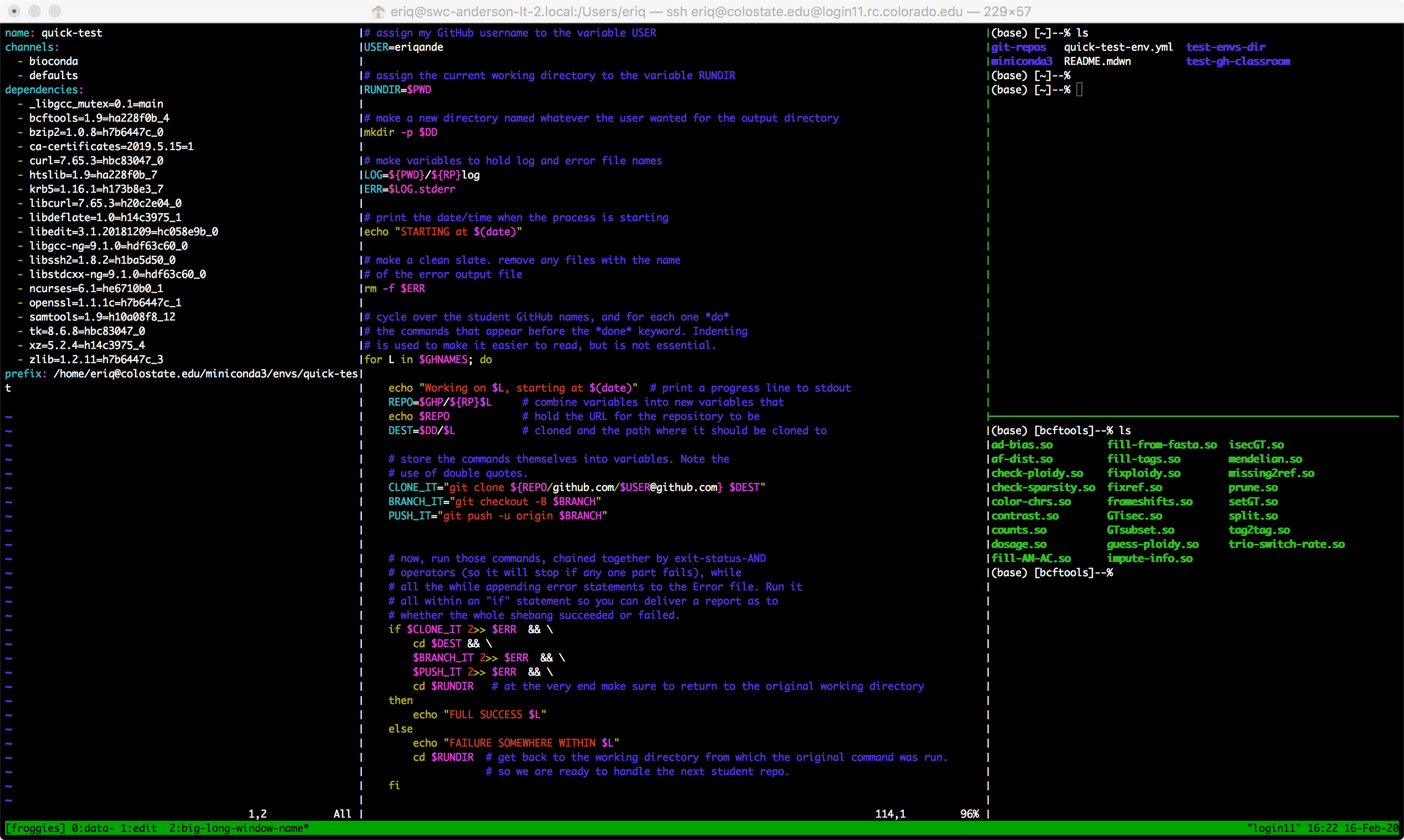

[froggies] 0:bash* "login11" 20:02 15-Feb-20tmux can spawn for you.login11). Many clusters have multiple login

or head nodes, as they are called. The next time you login to the cluster, you

might be assigned to a different login node which will have no idea about your

tmux sessions. If that were the case in this example I would have to use slogin login11 and

authenticate again to get logged into login11 to reconnect to my

tmux session, froggies. Or, if you were a CSU student and wanted to login specifically to

the login11 node on Summit the next time you logged on you could do

ssh username@colostate.edu@login11.rc.colorado.edu. Note the specific login11 in that

statement.

Now, imagine that we want to use this window in our froggies session, to look at some

frog data we have. Accordingly, we might navigate to the directory where those data live

and look at the data with head and less, etc. That is all great, until we realize that

we also want to edit some scripts that we wrote for processing our froggy data. These scripts

might be in a directory far removed from the data directory we are currently in, and we don’t really

want to keep navigating back and forth between those two directories within a single bash shell.

Clearly, we would like to have two windows that we could switch between: one for inspecting

our data, and the other for editing our scripts.

We are in luck! We can do this with tmux. However, now that we are safely working in a session

that tmux started for us, we no longer have to shout “Hey Tmux!” Rather we can just “ring a little

bell” to get his attention. In the default tmux configuration, you do that by pressing

<cntrl>-b from anywhere within a tmux window. This is easy to remember because it is like

a “b” for the “bell” that we ring to get our faithful servant’s attention. <cntrl>-b is known

as the “prefix” sequence that starts all requests to tmux from within a session.

The first thing that we are going to do is ask tmux to let us assign a more descriptive,

name—data to be specific—to the current window. We do this with

<cntrl>-b ,(That’s right! It’s a control-b and then a comma. tmux likes to get by on a minimum number

of keystrokes.) When you do that, the green band at the bottom of the window changes color

and tells you that you can rename the current window. We simply use our keyboard to

change the name to “data”. That was super easy!

Now, to make a new window with a new bash shell that we can use for writing scripts

we do <cntrl>-b c. Try it! That gives you a new window within the froggies session

and switches your focus to it. It is as if Tmux (in his mustard-stained shirt) has created

a new window on the froggies terminal, brought it to the front, and shared it with you.

The left side of the green tmux status bar at the bottom of the screen now says:

[froggies] 0:data- 1:bash*Holy Moly! This is telling you that the froggies session has two windows in it: the first

numbered 0 and named data, and the second numbered 1 and named bash. The - at the end

of 0:data- is telling you that data is the window you were previously focused on, but that

now you are currently focused on the window with the * after its name: 1:bash*.

So, the name bash is not as informative as it could be. Since we will be using this

new window for editing scripts, let’s rename it to edit. You can do that with

<cntrl>-b ,. Do it!

OK! Now, if you have been paying attention, you probably realize that tmux has given us

two windows (with two different bash shells) in this session called froggies. Not only that

but it has associated a single-digit number with each window. If you are all about keyboard

shortcuts, then you probably have already imagined that tmux will let you switch between

these two windows with <cntrl>-b plus a digit (0 or 1 in this case). Play with that.

Do <cntrl>-b 0 and <cntrl>-b 1 and feel the power!

Now, for fun, imagine that we want to have another window and a bash shell for launching

jobs. Make a new window, name it launch, and then switch between those three windows.

Finally. When you are done with all that, you tell Tmux to detach from this session by typing:

<cntrl>-b d(The d is for “detach”). This should kick you back to the shell from which you

first shouted “Hey Tmux!” by issuing the tmux a -t froggies command. So, you

can’t see the windows of your froggies session any longer, but do not despair!

Those windows are still on the monitor Tmux set up for you, casting an eerie glow

on his mustard stained shirt.

If you want to get back in the driver’s seat with all of those windows, you simply need to

tell Tmux that you want to be attached again via his miracle-mirroring device. Since we

are no longer in a tmux window, we don’t use our <cntrl-b> bell to get Tmux’s attention.

We have to shout:

The -t flag stands for “target.” The froggies session is the target of our

attach request. Note that if you don’t like typing that much, you can shorten this to:

Of course, sometimes, when you log back onto the server, you won’t remember the name

of the tmux session(s) you started. Use this command to list them all:

The ls here stands for “list-sessions.” This can be particularly useful if you

actually have multiple sessions. For example, suppose you are a poly-taxa genomicist,

with projects not only on a frog species, but also on a fish and a bird species. You

might have a separate session for each of those, so that when you issue tmux ls the

result could look something like:

% tmux ls

birdies: 4 windows (created Sun Feb 16 07:23:30 2020) [203x59]

fishies: 2 windows (created Sun Feb 16 07:23:55 2020) [203x59]

froggies: 3 windows (created Sun Feb 16 07:22:36 2020) [203x59]That is enough to remind you of which session you might wish to reattach to.

Finally, if you are all done with a tmux session, and you have detached from it,

then from your shell prompt (not within a tmux session) you can do, for example:

to kill the session. There are other ways to kill sessions while you are in them, but that is not so much needed.

Table 7.1 reviews the minimal set of

tmux commands just described. Though there is much more that

can be done with tmux, those commands will get you started.

| Within tmux? | Command | Effect |

|---|---|---|

| N | tmux ls |

List any tmux sessions the server knows about |

| N | tmux new -s name |

Create a new tmux session named “name” |

| N | tmux attach -t name |

Attach to the existing tmux session “name” |

| N | tmux a -t name |

Same as “attach” but shorter. |

| N | tmux kill-session -t name |

Kill the tmux session named “name” |

| Y | <cntrl>-b , |

Edit the name of the current window |

| Y | <cntrl>-b c |

Create a new window |

| Y | <cntrl>-b 3 |

Move focus to window 3 |

| Y | <cntrl>-b & |

Kill current window |

| Y | <cntrl>-b d |

Detach from current session |

| Y | <cntrl>-l |

Clear screen current window |

7.4.3 Further steps with tmux

The previous section merely scratched the surface of what is possible with tmux.

Indeed, that is the case with this section. But here I just want to leave you with a

taste for how to configure tmux to your liking, and also with the ability to create

different panes within a window within a session. You guessed it! A pane is made by

splitting a window (which is itself a part of a session) into two different

sections, each one running its own bash shell.

Before we start making panes, we set some configurations that make the

establishment of panes more intuitive (by using keystrokes that are easier

to remember) and others that make it easier to quickly adjust the size of the panes.

So, first, add these lines to ~/.tmux.conf:

# splitting panes

bind \ split-window -h -c '#{pane_current_path}'

bind - split-window -v -c '#{pane_current_path}'

# easily resize panes with <C-b> + one of j, k, h, l

bind-key j resize-pane -D 10

bind-key k resize-pane -U 10

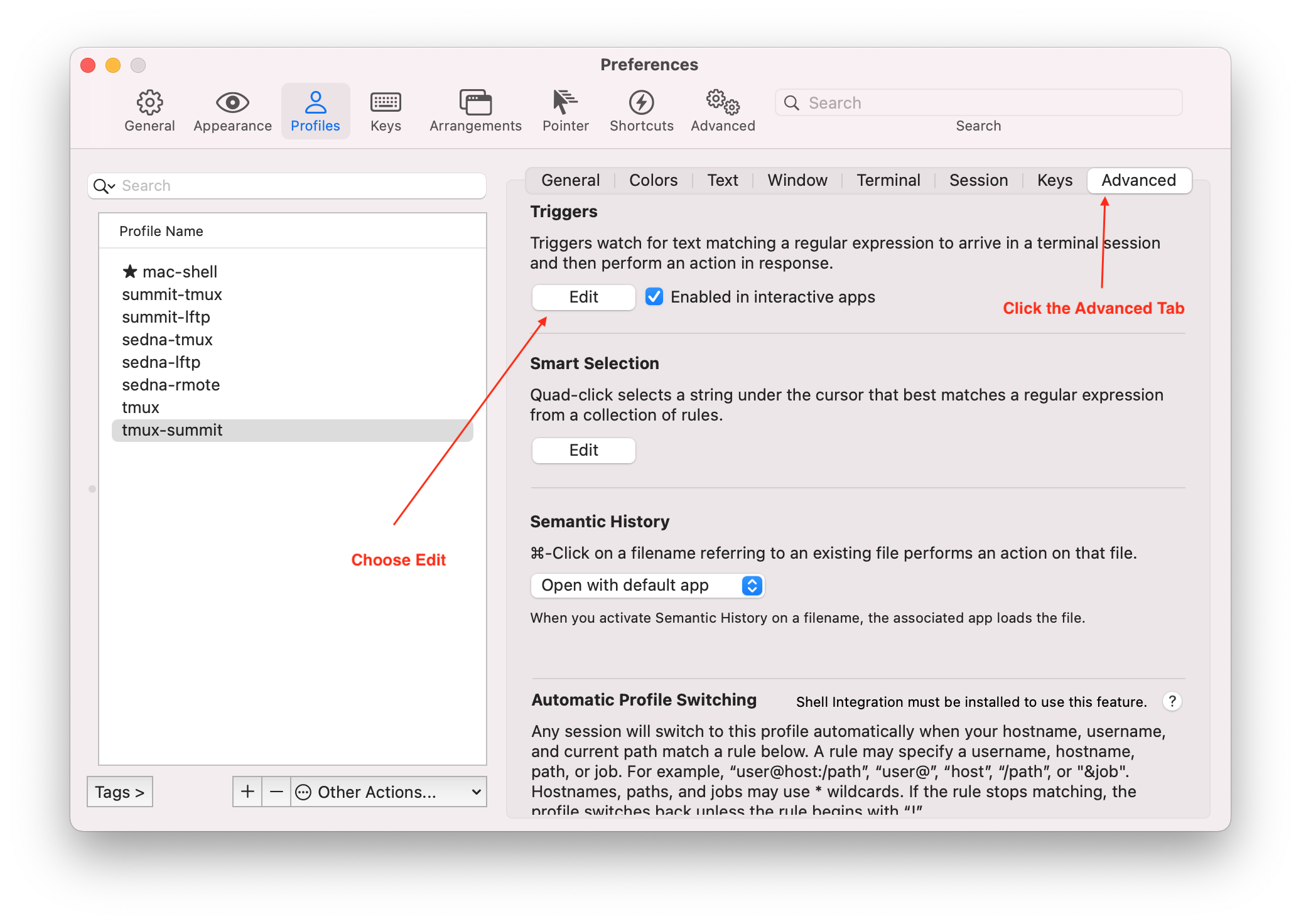

bind-key h resize-pane -L 10